Without shared storage it is quite hard to deploy a realistic test scenario. With vSphere 5, VMware introduced the vSphere Storage Appliance (VSA). The VSA turns the local storage of up to 3 servers into mirrored shared storage. This sounds great for a test environment because it supports many VMware features such as vMotion, HA and DRS.

Before installing, there are a few things to check, because the VSA has very strict system requirements. As this is only a test environment and I do not need VMware support, my main goal is simply to get the VSA up and running. The server requirements are:

- 6GB RAM

- 2GHz CPU

- 4 NICs

- Identical configuration across all nodes

- Clean ESXi 5.0 Installation
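To make the idea of a hardware audit concrete, here is a minimal Python sketch that compares each host against the thresholds listed above. The `HostSpec` class, the `check_host` and `check_identical` helpers and the example host values are all hypothetical; this is not the VSA installer's code, only an illustration of the kind of checks it performs.

```python
from dataclasses import dataclass

# Thresholds taken from the requirements list above.
MIN_RAM_GB = 6
MIN_CPU_GHZ = 2.0
MIN_NICS = 4


@dataclass
class HostSpec:
    """Hypothetical summary of one ESXi host's hardware."""
    name: str
    ram_gb: float
    cpu_ghz: float
    nics: int


def check_host(host: HostSpec, min_cpu_ghz: float = MIN_CPU_GHZ) -> list[str]:
    """Return a list of audit failures for one host (empty if it passes)."""
    failures = []
    if host.ram_gb < MIN_RAM_GB:
        failures.append(f"{host.name}: RAM is less than {MIN_RAM_GB}GB")
    if host.cpu_ghz < min_cpu_ghz:
        failures.append(f"{host.name}: CPU speed is less than {min_cpu_ghz:g}GHz")
    if host.nics < MIN_NICS:
        failures.append(f"{host.name}: fewer than {MIN_NICS} NICs")
    return failures


def check_identical(hosts: list[HostSpec]) -> bool:
    """All nodes must share the same configuration (host names are ignored)."""
    specs = {(h.ram_gb, h.cpu_ghz, h.nics) for h in hosts}
    return len(specs) <= 1


if __name__ == "__main__":
    # Example values only: two identical 1.5GHz hosts with 8GB RAM and 4 NICs.
    hosts = [HostSpec("esx1", 8, 1.5, 4), HostSpec("esx2", 8, 1.5, 4)]
    for h in hosts:
        print(check_host(h) or "OK")
    print("identical:", check_identical(hosts))
```

With the default 2GHz threshold the example hosts fail only the CPU check; calling `check_host(h, min_cpu_ghz=1)` mirrors the `minCpuSpeedInGHz` tweak and lets them pass.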

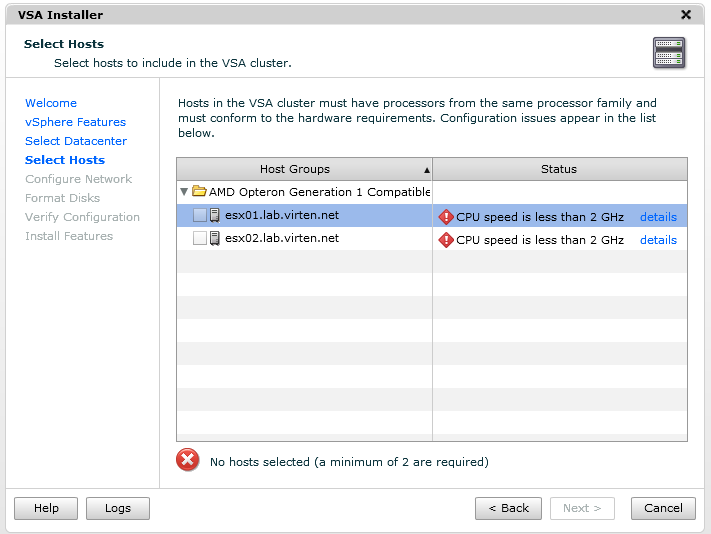

I deliberately ignored the vendor/model and hardware RAID controller requirements, as these are only soft requirements. The HP ProLiant N40L meets all of the requirements above except the 2GHz CPU. However, the installer reads its host audit configuration from a small XML file during the installation, and I am going to tweak this file a little to get the installation done.

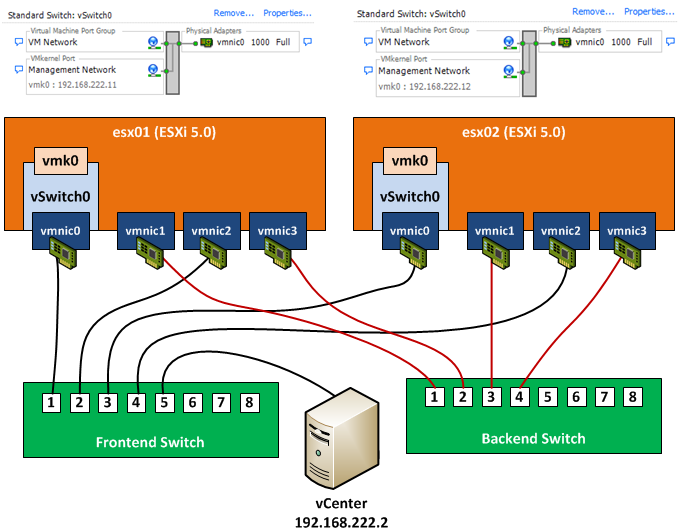

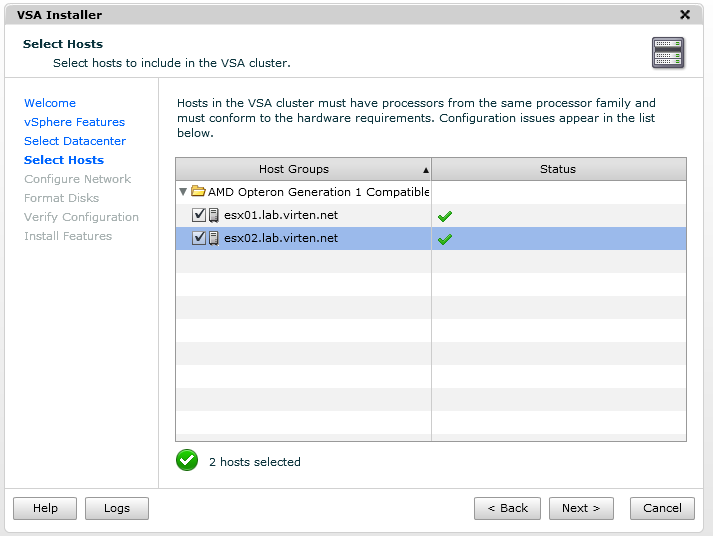

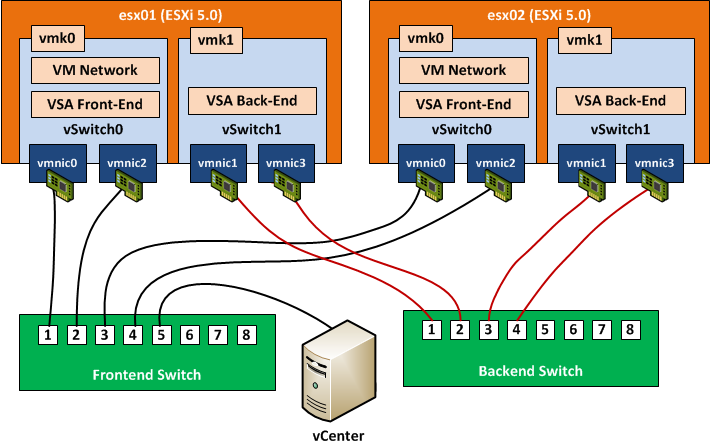

The environment I use for the installation consists of 2 ESXi 5.0 hosts and a vCenter Server. Each ESXi host is configured with only one IP address. No further changes have been made and no VMs are running, as the installer requires this. The following diagram shows the deployment.

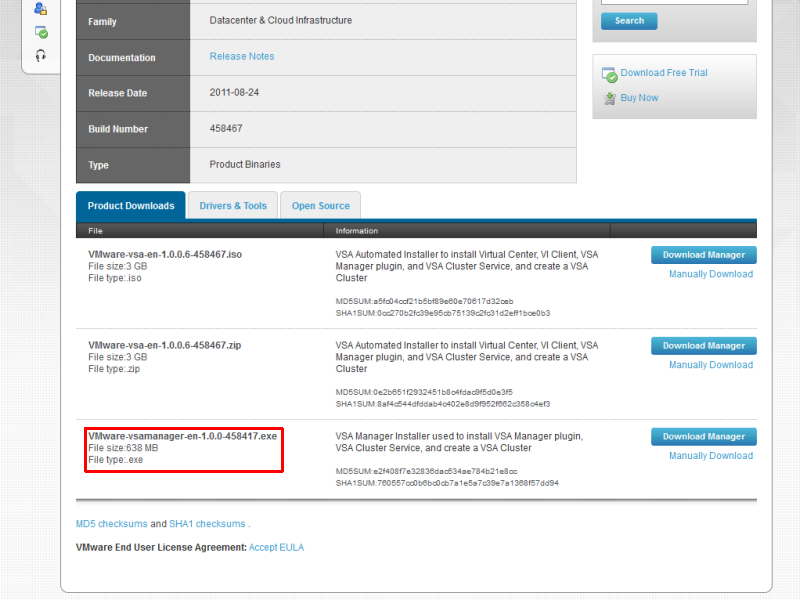

VMware offers 3 packages to download and install the storage appliance: a combined image containing vCenter and the VSA (as .iso or .zip) and a standalone installer. My vCenter is already up and running, so I only need the VSA Manager installer. The VSA download is included with the vSphere package.

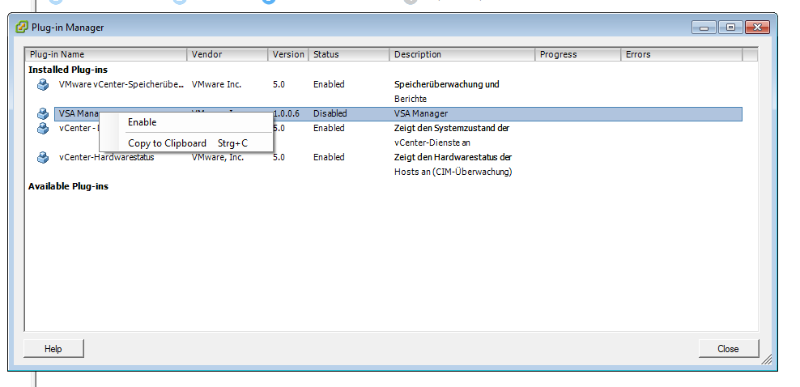

The installation is pretty straightforward. Only the vCenter's IP address and the license key have to be filled in. After the installation has finished, start the vSphere Client. The VSA Manager is a plugin that needs to be activated. To activate it, go to Plugins -> Manage Plugins…

If this succeeds, a new tab called “VSA Manager” appears at the datacenter level. As mentioned earlier, the 2GHz CPU requirement needs a little tweak to get the installation finished. Without it, the installer stops during the hardware audit with the message “CPU speed is less than 2GHz”.

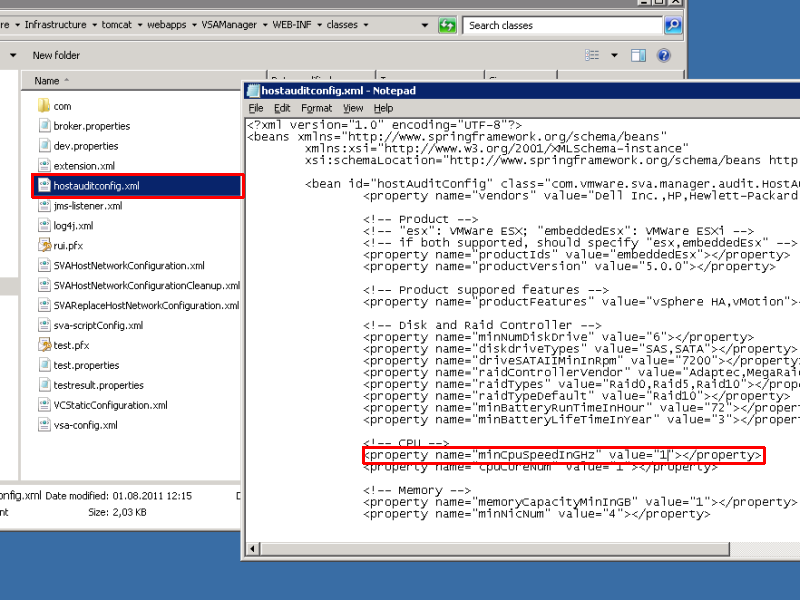

The installer uses an XML configuration file located on the vCenter Server:

C:\Program Files\VMware\Infrastructure\tomcat\webapps\VSAManager\WEB-INF\classes\hostauditconfig.xml

Open this file in Notepad and set the minCpuSpeedInGHz value to 1.
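If you prefer to script the change instead of editing the file by hand, a minimal sketch with Python's standard `xml.etree.ElementTree` module could look like the one below. The layout of `hostauditconfig.xml` is not shown in this post, so the sketch assumes `minCpuSpeedInGHz` appears either as an element name or as an attribute; the helpers `set_min_cpu_speed` and `patch_file` are hypothetical names. Check the real file and keep a backup before writing to it.

```python
import xml.etree.ElementTree as ET

SETTING = "minCpuSpeedInGHz"


def local_name(tag: str) -> str:
    """Strip an XML namespace such as '{urn:x}name' down to 'name'."""
    return tag.rsplit("}", 1)[-1]


def set_min_cpu_speed(root: ET.Element, value: str = "1") -> int:
    """Set every minCpuSpeedInGHz element text or attribute under root.

    Assumption: the setting is stored either as an element
    (<minCpuSpeedInGHz>2</minCpuSpeedInGHz>) or as an attribute
    (minCpuSpeedInGHz="2"). Returns how many occurrences were changed.
    """
    changed = 0
    for elem in root.iter():
        if local_name(elem.tag) == SETTING:
            elem.text = value
            changed += 1
        if SETTING in elem.attrib:
            elem.set(SETTING, value)
            changed += 1
    return changed


def patch_file(path: str, value: str = "1") -> int:
    """Parse the file, change the setting and write it back in place."""
    tree = ET.parse(path)
    changed = set_min_cpu_speed(tree.getroot(), value)
    if changed:
        tree.write(path, encoding="utf-8", xml_declaration=True)
    return changed


# Example (back up the original file first, then reboot vCenter Server):
# patch_file(r"C:\Program Files\VMware\Infrastructure\tomcat\webapps\VSAManager\WEB-INF\classes\hostauditconfig.xml")
```

Note that ElementTree drops XML comments and may rename namespace prefixes when it rewrites a file, so compare the result with your backup; for a one-off change, Notepad is the safer route.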

Reboot the vCenter Server.

After the server has been rebooted, the VSA audit should succeed and the installation can be completed.

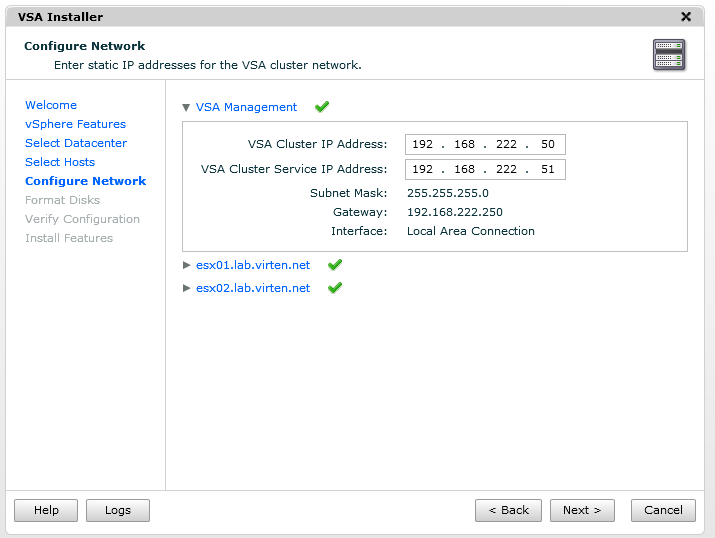

The following page is for the IP configuration. Here is an explanation of the IP addresses used:

VSA Management

- VSA Cluster IP Address: shared management IP address, used by vCenter

- VSA Cluster Service IP address: Only available in a 2-node configuration

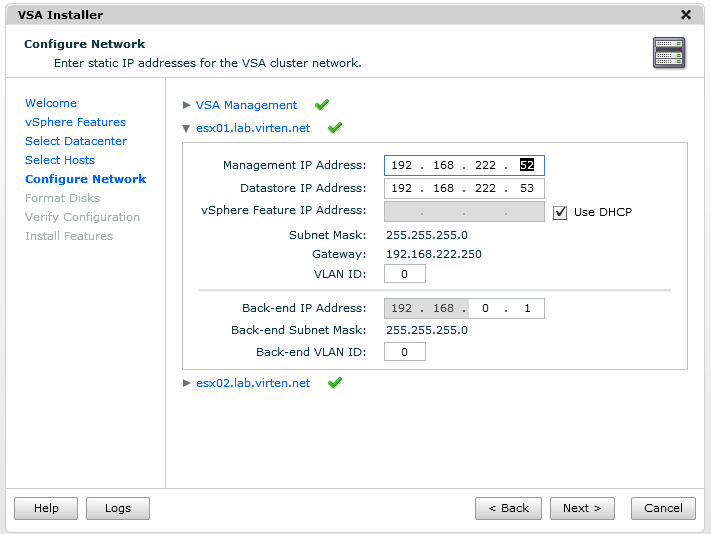

Nodes

- Management IP Address: IP address used for communication with the host and for heartbeats

- Datastore IP Address: NFS datastore address mapped to each VSA Appliance

- vSphere Feature IP Address: ESXi vmk address for vMotion

- Back-end IP Address: Used for replication and cluster communication

Be aware that the management IP addresses, the vCenter IP address and the NFS addresses must all be in the same subnet.
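A quick way to sanity-check an address plan before running the installer is Python's standard `ipaddress` module. In this sketch `same_subnet` is a hypothetical helper, and the addresses and the /24 prefix are made-up examples; it only verifies the rule that the management, vCenter and NFS addresses share one subnet.

```python
import ipaddress


def same_subnet(addresses: list[str], prefix: int) -> bool:
    """True if all addresses fall into the same IPv4 network of the given prefix length."""
    networks = {
        ipaddress.ip_interface(f"{addr}/{prefix}").network for addr in addresses
    }
    return len(networks) == 1


if __name__ == "__main__":
    # Example front-end plan for a 2-node cluster (all values hypothetical).
    plan = {
        "vCenter": "192.168.1.10",
        "node1 management": "192.168.1.31",
        "node2 management": "192.168.1.32",
        "node1 datastore (NFS)": "192.168.1.41",
        "node2 datastore (NFS)": "192.168.1.42",
    }
    print("same subnet:", same_subnet(list(plan.values()), 24))
```

Each address is combined with the prefix length and reduced to its network; if more than one distinct network remains, at least one address sits in a different subnet.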

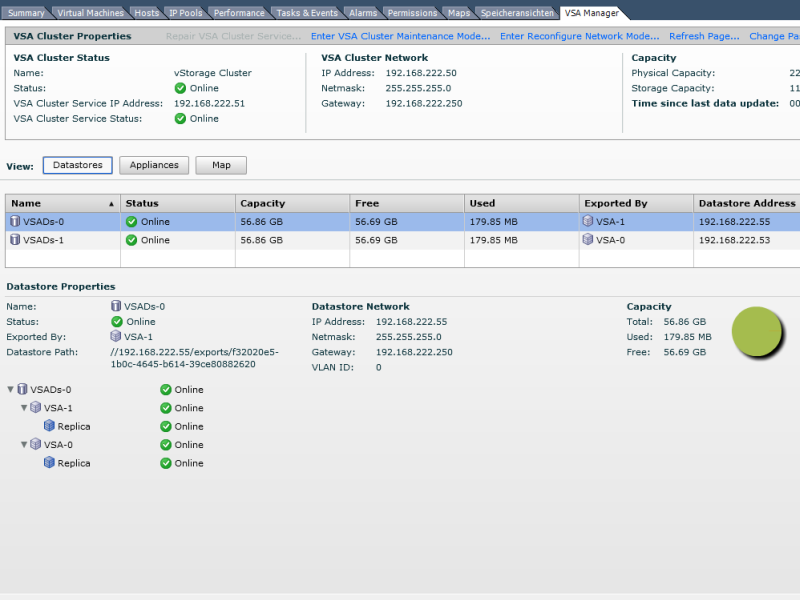

The installation should now succeed without any errors and end up with 2 shared datastores, each offering only 50% of the underlying local capacity because the data is mirrored. The VSA Manager tab offers a good overview of the configuration.
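The capacity arithmetic behind this is simple: every datastore has a mirror copy on another node, so roughly half of each node's local space ends up as usable shared storage. The sketch below uses a hypothetical `vsa_capacity` helper, assumes equal local capacity on every node and ignores any other overhead (local RAID, appliance system disks), so treat its result as an upper bound.

```python
def vsa_capacity(nodes: int, local_gb: float) -> tuple[float, float]:
    """Return (size of each shared datastore, total usable capacity) in GB.

    Simplified model: each node exports one datastore and holds a mirror
    replica of another node's datastore, so half of each node's space is usable.
    """
    if nodes not in (2, 3):
        raise ValueError("the VSA cluster is built from 2 or 3 nodes")
    per_datastore = local_gb / 2
    return per_datastore, per_datastore * nodes


if __name__ == "__main__":
    size, total = vsa_capacity(2, 1000)
    print(f"each datastore: {size:g}GB, total: {total:g}GB")
```

For example, two nodes with 1000GB of local storage each yield two 500GB datastores, 1000GB of shared storage in total.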

Conclusion

Installing the vSphere Storage Appliance on HP's N40L is a great way to get protected shared storage for your test environment, and it also stops local storage from going to waste. As the VSA supports many vSphere features and products, it is definitely a valid alternative to dedicated shared storage.

So what do you do when the VSA and vCenter trials expire? Start all over again? Do you have two N40Ls for the VSA feature?

In my case the N40L is not the environment for testing day-to-day administration tasks, so I don't mind if it is down or if I have to start over. In my homelab I am usually testing new stuff, so I prefer a clean new installation.

If your company is a VMware Partner, you should be eligible for an NFR license, which can be used to keep test servers running for longer.

I have a similar configuration, with ports 1 and 2 connected to a dedicated back-end switch. This is not covered in VMware's vSphere Storage Appliance Networking Guide. During the VSA installation one host gets disconnected and the installation fails. How did you get it up and running?

My configuration is covered neither in the networking guide nor by the installer. I changed the vmk1 (vMotion) address later to resolve routing problems: just put vmk1 on both servers into a different subnet.

Do you have an interconnect between both switches? If not, this may be the cause of the failure. The VSA installer uses NICs 1 & 3 for management, not NICs 1 & 2 (see the network diagram), so your wiring breaks the connection between vCenter and the ESXi server. Just swap NICs 2 & 3 and retry.

How many physical machines (N40L) do you use in this lab, one or two? Or do you run two virtual ESXi hosts inside one N40L?

I am asking all of this because of the four NICs used in this setup; I couldn't figure out where the 4 NICs come from.

Thanks

Hi.

I'm trying to set up the same scenario with two nodes, but I don't have another switch for the back-end network. Is it possible to accomplish this with crossover cables?

Also, I was wondering whether it's possible to add another vSwitch with an extra NIC to connect to another VM network. This is to host VMs that are in another segment (a DMZ, for example).

Thanks

The second switch can also be a VLAN on the first switch. You can even use the same switch and the same VLAN for everything. That is not best practice, but there is no technical issue.

As you have 2 ports, you can't use crossover cables. All ports have to be able to talk to each other.

You can add additional vSwitches once the VSA installation is finished; that is no problem. With a later version (this post covers VSA 1.0) you can also use the "Brownfield" installation path, where you set up the physical networking completely by yourself.

Ok, got it. I will try.

Yes, I read about the "Brownfield" installation with recent versions. I'm using 1.0.

Thanks!

Wouldn't this configuration be unable to handle a switch failure?

The reason to use two switches is also to be able to handle a switch failure. With your wiring, if you lose the front-end switch, haven't you lost all your front-end traffic?