One problem that comes up quite often during VMware Virtual SAN Beta testing is that the Network status remains in the "Misconfiguration detected" state. Sometimes the cluster also shows up with different "Network Partition Groups". This message can have several causes. In this post I am going through the most common pitfalls and how to solve them.

VSAN Version

The first thing to check is that vCenter and all ESXi hosts are on the latest version. The refreshed version of the beta fixed some networking-related bugs. Download the latest version from the VSAN Beta Community.

- VMware vCenter Server Version 5.5.0 Build 1440532

- VMware ESXi 5.5.0 1439689

Configuration Sequence

Make sure that you do not add ESXi Hosts without VSAN activated network adapters to the Cluster. Use the following configuration sequence to avoid problems:

- Configure a VMkernel Port on the ESXi Host

- Add ESXi Hosts to the Cluster

- Activate Virtual SAN in the Cluster

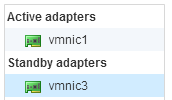

Failover

Configure physical adapters related to Virtual SAN Traffic Port Groups as Active/Standby. In some cases it works with Active/Active, but I've also seen problems with it.

Consistent VMkernel Port Configuration

Inconsistent VMkernel port configuration can cause problems. Use the following best practice guidelines:

- Use the same Uplink Adapters on each ESXi Host

- Use the same number of Virtual SAN activated VMkernel Ports on each ESXi Host

- Do not use multiple VMkernel Ports per ESXi Host in the same subnet
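The last two guidelines are easy to check once you have an inventory of the Virtual SAN VMkernel ports. The following is a minimal sketch, assuming you have already collected host name, VMkernel port name, IP address and netmask per port by whatever means you prefer. The function names and the sample inventory are hypothetical and not part of any VMware tool.

```python
import ipaddress
from collections import defaultdict


def find_subnet_conflicts(vmk_ports):
    """Return {host: {subnet: [vmk names]}} for every subnet that is used
    by more than one Virtual SAN VMkernel port on the same host.

    vmk_ports is an iterable of (host, vmk_name, ip, netmask) tuples.
    """
    by_host = defaultdict(lambda: defaultdict(list))
    for host, vmk, ip, netmask in vmk_ports:
        # ip_interface accepts "address/netmask" and derives the network
        network = ipaddress.ip_interface(f"{ip}/{netmask}").network
        by_host[host][str(network)].append(vmk)

    conflicts = {}
    for host, networks in by_host.items():
        duplicates = {net: names for net, names in networks.items() if len(names) > 1}
        if duplicates:
            conflicts[host] = duplicates
    return conflicts


def port_counts(vmk_ports):
    """Return {host: number of Virtual SAN VMkernel ports}."""
    counts = defaultdict(int)
    for host, _vmk, _ip, _netmask in vmk_ports:
        counts[host] += 1
    return dict(counts)


# Hypothetical inventory, for illustration only
inventory = [
    ("esx1", "vmk1", "192.168.0.21", "255.255.255.0"),
    ("esx2", "vmk1", "192.168.0.22", "255.255.255.0"),
    ("esx2", "vmk2", "192.168.0.32", "255.255.255.0"),  # same subnet as vmk1
]

print(find_subnet_conflicts(inventory))
print(port_counts(inventory))
```

In this sample, esx2 breaks both guidelines: it has two ports in the same subnet, and it has a different number of ports than esx1.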

Verify Multicast Traffic

In some environments, multicast traffic does not work out of the box. Sometimes it is disabled or blocked. Talk to your network admins and verify that multicast traffic (IGMP snooping) is enabled.

Virtual SAN uses two multicast groups. One group is used for the master/backup communication, the other is used by the agents:

- Agent Group Multicast Address: 224.2.3.4

- Master Group Multicast Address: 224.1.2.3
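If you have a Linux machine in the same layer 2 segment as the Virtual SAN network, you can also join both groups yourself and see who is sending. This is only a rough sketch, not a VMware tool. It assumes the UDP ports 12345 (master) and 23451 (agent), taken from the tcpdump captures in this post, and all function names are made up for illustration.

```python
import socket
import time

# Group addresses from the list above; ports as seen in the tcpdump captures
VSAN_GROUPS = {
    "master": ("224.1.2.3", 12345),
    "agent": ("224.2.3.4", 23451),
}


def membership_request(group, local_ip="0.0.0.0"):
    """Build the 8-byte ip_mreq value: group address + local interface address."""
    return socket.inet_aton(group) + socket.inet_aton(local_ip)


def open_listener(group, port, local_ip="0.0.0.0"):
    """Open a UDP socket bound to the port and join the multicast group."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM, socket.IPPROTO_UDP)
    sock.setsockopt(socket.SOL_SOCKET, socket.SO_REUSEADDR, 1)
    sock.bind(("", port))
    sock.setsockopt(socket.IPPROTO_IP, socket.IP_ADD_MEMBERSHIP,
                    membership_request(group, local_ip))
    return sock


def collect_senders(name, seconds=5.0, local_ip="0.0.0.0"):
    """Listen on one group for a fixed time and return the set of sender IPs."""
    group, port = VSAN_GROUPS[name]
    sock = open_listener(group, port, local_ip)
    senders = set()
    deadline = time.monotonic() + seconds
    try:
        while True:
            remaining = deadline - time.monotonic()
            if remaining <= 0:
                break
            sock.settimeout(remaining)
            try:
                _data, (src_ip, _src_port) = sock.recvfrom(65535)
            except socket.timeout:
                break
            senders.add(src_ip)
    finally:
        sock.close()
    return senders
```

Calling `collect_senders("agent")` and `collect_senders("master")` should return the VMkernel IP addresses that are currently sending to each group. An empty set usually means that multicast does not reach the machine you are listening on.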

You can use tcpdump to quickly verify that you can see multicast traffic from all ESXi Hosts. Use zypper install tcpdump to install tcpdump on a vSphere Management Assistant (vMA). In my case, I am verifying multicast traffic for 4 ESXi Hosts:

vma:~ # tcpdump -n dst host 224.1.2.3 or dst host 224.2.3.4

tcpdump: verbose output suppressed, use -v or -vv for full protocol decode

listening on eth0, link-type EN10MB (Ethernet), capture size 65535 bytes

20:11:25.536741 IP 192.168.0.21.25344 > 224.2.3.4.23451: UDP, length 184

20:11:25.677146 IP 192.168.0.23.37978 > 224.2.3.4.23451: UDP, length 272

20:11:26.021094 IP 192.168.0.22.40675 > 224.1.2.3.12345: UDP, length 184

20:11:26.104572 IP 192.168.0.24.49734 > 224.1.2.3.12345: UDP, length 184

20:11:26.536781 IP 192.168.0.21.25344 > 224.2.3.4.23451: UDP, length 184

20:11:26.676983 IP 192.168.0.23.37978 > 224.2.3.4.23451: UDP, length 272

20:11:27.021015 IP 192.168.0.22.40675 > 224.1.2.3.12345: UDP, length 184

20:11:27.104347 IP 192.168.0.24.49734 > 224.1.2.3.12345: UDP, length 184

^C
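With more than a handful of hosts it gets tedious to check the capture by eye. Here is a small sketch that parses saved tcpdump output and reports which of the expected hosts never showed up in either group. It assumes the line format shown in the capture above; the function names and the extra host 192.168.0.25 are only there for illustration.

```python
import re
from collections import defaultdict

# Multicast groups used by Virtual SAN, as listed in this post
GROUPS = {"224.1.2.3": "master", "224.2.3.4": "agent"}

# Matches e.g. "IP 192.168.0.21.25344 > 224.2.3.4.23451: UDP, length 184"
LINE_RE = re.compile(
    r"IP (\d+\.\d+\.\d+\.\d+)\.\d+ > (\d+\.\d+\.\d+\.\d+)\.\d+: UDP"
)


def senders_by_group(tcpdump_text):
    """Return {"master": {ips}, "agent": {ips}} for all matching lines."""
    seen = defaultdict(set)
    for line in tcpdump_text.splitlines():
        match = LINE_RE.search(line)
        if match and match.group(2) in GROUPS:
            seen[GROUPS[match.group(2)]].add(match.group(1))
    return dict(seen)


def silent_hosts(tcpdump_text, expected_hosts):
    """Return the expected hosts that sent nothing to either group."""
    seen = senders_by_group(tcpdump_text)
    heard = set().union(*seen.values())
    return sorted(set(expected_hosts) - heard)


# A few lines from the capture above
capture = """\
20:11:25.536741 IP 192.168.0.21.25344 > 224.2.3.4.23451: UDP, length 184
20:11:25.677146 IP 192.168.0.23.37978 > 224.2.3.4.23451: UDP, length 272
20:11:26.021094 IP 192.168.0.22.40675 > 224.1.2.3.12345: UDP, length 184
20:11:26.104572 IP 192.168.0.24.49734 > 224.1.2.3.12345: UDP, length 184
"""

# 192.168.0.25 is a made-up fifth host to show what a missing sender looks like
expected = ["192.168.0.21", "192.168.0.22", "192.168.0.23",
            "192.168.0.24", "192.168.0.25"]

print(senders_by_group(capture))
print(silent_hosts(capture, expected))
```

A host that is listed as silent is a good starting point for troubleshooting, but a short capture can also simply miss a host, so let tcpdump run for a few seconds before drawing conclusions.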

Nested Virtual SAN

When you run Virtual SAN on virtualized ESXi hosts and your cluster is partitioned, migrate all nested ESXi hosts to the same physical ESXi host. That should quickly solve the problem, without the need to troubleshoot the network infrastructure.

I have a 4 node vSAN cluster. When I look at the multicast information on the switches I see all 4 nodes with the agent multicast address, but I only see 2 nodes with the master multicast address. Is this normal behavior?

You can also use tcpdump-uw, which is built into ESXi:

tcpdump-uw -i vmk8 -n -s0 -t -c 20 udp port 12345 or udp port 23451

tcpdump-uw: verbose output suppressed, use -v or -vv for full protocol decode

listening on vmk8, link-type EN10MB (Ethernet), capture size 65535 bytes

IP 172.16.233.147.14598 > 224.2.3.4.23451: UDP, length 200

IP 172.16.233.146.56330 > 224.2.3.4.23451: UDP, length 200

IP 172.16.233.166.59135 > 224.1.2.3.12345: UDP, length 200

IP 172.16.233.117.48725 > 224.2.3.4.23451: UDP, length 320

IP 172.16.233.116.41319 > 224.2.3.4.23451: UDP, length 320

IP 172.16.233.117.48725 > 224.2.3.4.23451: UDP, length 496