Intel NUCs with ESXi are being used as home servers and in many home labs. If you are generally interested in running ESXi on Intel NUCs, read this post first. One major drawback is that they only have a single network port. There are USB NICs on the market, but with ESXi hosts they only work in pass-through mode. That means that USB NICs can only be used inside VMs and not by the hypervisor itself as a vmnic.

The slightly older 4th Gen NUCs had a Mini PCIe slot that allowed an additional NIC to be installed. With that slot, it was possible to install a Syba Mini PCIe NIC, for example. Although that adapter is unsupported with ESXi and did not fit into the NUC chassis, there are solutions.

Unfortunately, the 5th Gen NUC no longer has a Mini PCIe slot. Instead, it has M.2 slots. An easy solution would be an M.2 NIC, but so far no such cards are available. In this post, I will explain the possibilities of using PCIe cards with the M.2 slot to upgrade the 5th Gen NUC with additional NICs or other cards like Fibre Channel HBAs.

More Information about the 5th Gen NUC M.2 Slot

M.2 is also known as "Next Generation Form Factor" (NGFF). It is a specification for internally mounted computer expansion cards. It is the successor to the mSATA standard, which uses the PCIe Mini Card physical card layout and connectors. M.2 is a very flexible standard that allows different module sizes and various interfaces. As M.2 cards are available in many possible variations, they are divided into different Form Factors and Keys.

Form Factors - M.2 devices are denoted using a WWLL naming scheme, where "WW" specifies the module width and "LL" specifies the module length. You can find notations like "M.2 2280 Module" in the NUC documentation.
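As a small illustration of the WWLL scheme, the following sketch splits a size code such as "2280" into width and length in millimetres. The function name `parse_m2_size` is made up for this example, and treating everything after the first two digits as the length is an assumption that also covers five-digit codes.

```python
from typing import Tuple


def parse_m2_size(code: str) -> Tuple[int, int]:
    """Split an M.2 size code (WWLL) into (width_mm, length_mm).

    Assumption for this sketch: the first two digits are the width and
    the remaining digits are the length.
    """
    if not code.isdigit() or len(code) < 4:
        raise ValueError(f"not an M.2 size code: {code!r}")
    return int(code[:2]), int(code[2:])


# The two module sizes mentioned for the 5th Gen NUCs.
for size in ("2280", "2230"):
    width, length = parse_m2_size(size)
    print(f"M.2 {size}: {width} mm wide, {length} mm long")
```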

Keys - M.2 modules provide a 75-pin connector. Depending on the type of module, certain pin positions are removed to present one or more keying notches. Host-side M.2 connectors may populate one or more mating key positions, determining the type of modules accepted by the host. There are 12 different keys specified (Key A-M).

Not all 5th Gen Intel NUCs have the same M.2 slots, but the slot I am mainly talking about in this post is available on all of them. It's the slot where you add the M.2 SSD. Only one NUC, the NUC5i5MYHE, provides a second M.2 slot (with a different key). Instead of the second M.2 slot, the other NUCs have a pre-soldered WiFi module.

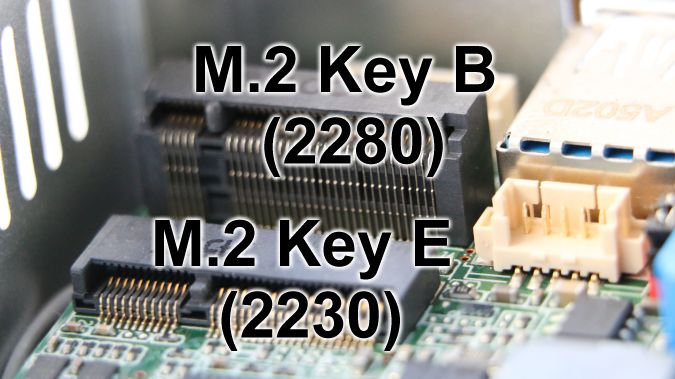

There are two M.2 Slots available in 5th Gen NUCs:

- M.2 Key B (2280) - All 5th Gen NUCs

- M.2 Key E (2230) - Only NUC5i5MYHE

The Key E slot is used for a WiFi adapter. The Key B slot is typically used for an M.2 SSD. According to the specification, however, they should theoretically provide the following interfaces:

- Key B: PCIe ×2, SATA, USB, Audio, UIM, HSIC, SSIC, I2C and SMBus

- Key E: PCIe ×2, USB, I2C, SDIO, UART and PCM
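The two lists above can be written down as a small lookup table. This is only a sketch of what the specification allows per key, as listed in this post; it says nothing about which interfaces a particular board actually wires up. The names `M2_KEY_INTERFACES` and `may_provide` are invented for this example.

```python
# Interfaces the specification allows per key, copied from the lists above.
# Whether a given host slot really connects them is a separate question.
M2_KEY_INTERFACES = {
    "B": {"PCIe x2", "SATA", "USB", "Audio", "UIM", "HSIC", "SSIC", "I2C", "SMBus"},
    "E": {"PCIe x2", "USB", "I2C", "SDIO", "UART", "PCM"},
}


def may_provide(key: str, interface: str) -> bool:
    """Return True if the given key may, per the lists above, carry the interface."""
    return interface in M2_KEY_INTERFACES.get(key.upper(), set())


# Interfaces that both keys may carry in theory.
shared = M2_KEY_INTERFACES["B"] & M2_KEY_INTERFACES["E"]
print(sorted(shared))
print(may_provide("E", "SATA"))
```

Both keys list PCIe, which is why an adapter to a PCIe slot is plausible for either of them on paper.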

M.2 to PCIe Adapter

Knowing that PCIe is available on M.2, the only thing you need is an adapter, right? Simple, but this kind of adapter appears to be very uncommon. I could only find these two adapters from a company called Bplus:

The P14S-P14FP adapter has a Key B interface which is available on all 5th gen NUCs. It converts the M.2 slot to a PCIe X4 slot where you can insert your PCIe network adapter.

The P15S-P15F adapter has a Key E interface which is the second M.2 slot on the NUC5i5MYHE. It converts the M.2 slot to a Mini PCIe slot. (As a side note, I didn't manage to get the P15S-P15F to work in my NUC but I'm currently trying to find out why.)

M.2 and PCIe Voltage Issue

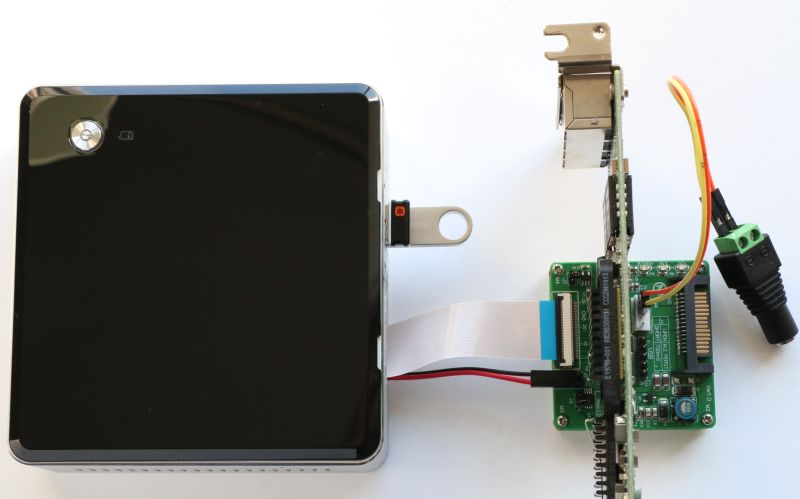

My first attempt to install the P14S-P14FP adapter was unsuccessful. The problem is that M.2 only provides 3.3V, while PCIe cards require both 3.3V and 12V. To solve that issue, the P14FB provides a 4-pin FDD power jack and a 15-pin SATA power jack. Unfortunately, the NUC has neither of those connectors, nor does it have any internal 12V headers. The only solution is to use an external power adapter.

Any 12V power adapter should fit. I am using one from a portable hard drive. I connected it to the 4-pin FDD power jack by using a "5.5 mm Female DC Power Connector" and a "2 Pin Male to Female Jumper Wire".

Make sure that you know the polarity. It is common for + to be on the inside, but some adapters might differ. The power adapter's polarity should be printed on its label. When in doubt, use a multimeter.

P14S-P14FP Installation

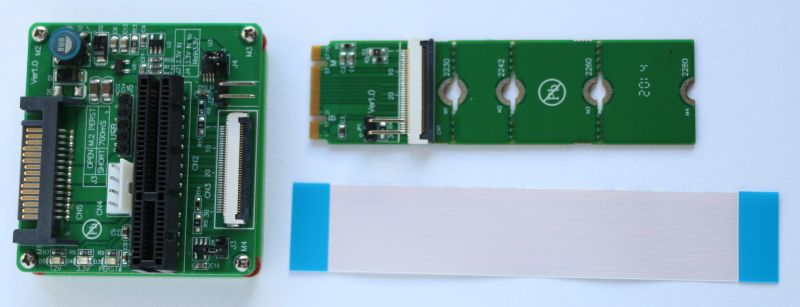

The adapter (or Extender Board) consists of 2 PCBs that are connected with a flexible flat cable (FFC). You should be aware that it comes without a case, so you probably have to build your own. Without further modification, the setup is very fragile and looks like this:

Required Components

- Intel NUC e.g. NUC5i5MYHE (Must have an M.2 Key B Slot)

- M.2 to PCIe X2 Edge Extender Board (P14S-P14FP)

- PCIe X2 Card e.g. Intel CT NIC EXPI9301CTBLK

- 12V Power Adapter (I am using one from an external hard drive)

- 5.5 mm Female DC Power Jack

- Male to Female Jumper Wire

Of course, this is in no way a supported configuration. It's only for engineering purposes. I can't guarantee that it will work, or that it won't break your components.

- Install the P14S into the NUC's Key B M.2 slot. It's a 2280 card that fits perfectly into the NUC.

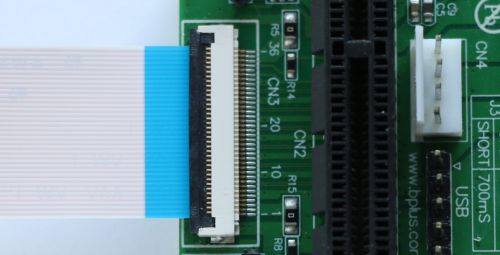

- Carefully lift the black bracket to an angle of 90 degrees, slide the FFC into the connector (blue side upwards), and close the bracket. The bracket should slightly squeeze the cable, but the cable should not bend.

- Get the cable out of the NUC. If you have the NUC5i5MYHE, you can remove the serial port bezel and put the cable through.

- Connect the other side of the FFC to the P14FB PCB.

- Connect the 2-pin Dupont cable to both PCBs. Make sure to connect the red wire to the marked pin on both sides.

- Connect an external 12V power adapter to the 4-pin FDD connector

- Insert a PCIe card into the P14FB

- Plug in the 12V adapter (You should see a green light indicating 12V power)

- Power on the NUC

Always plug in the 12V adapter first.

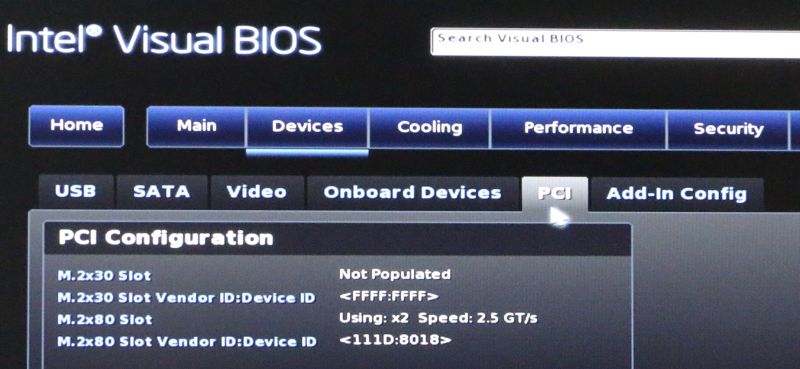

For the first test, I am using a network adapter with an Intel 82576 chipset. This is fully supported by ESXi, so I do not have any driver issues. If your ESXi does not detect the card, you should verify that the NUC has detected it in the BIOS (Devices > PCI). If the card has been detected there, you probably have a driver issue in ESXi.

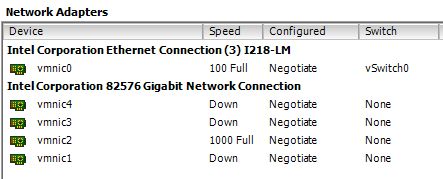

My NUC currently runs with VMware ESXi 6.0.0 build-2494585 (Setup Howto). The card has been detected without any further modification.

lspci output:

    [root@esx6:~] lspci |grep vmnic
    0000:00:19.0 Network controller: Intel Corporation Ethernet Connection (3) I218-LM [vmnic0]
    0000:04:00.0 Network controller: Intel Corporation 82576 Gigabit Network Connection [vmnic1]
    0000:04:00.1 Network controller: Intel Corporation 82576 Gigabit Network Connection [vmnic2]
    0000:05:00.0 Network controller: Intel Corporation 82576 Gigabit Network Connection [vmnic3]
    0000:05:00.1 Network controller: Intel Corporation 82576 Gigabit Network Connection [vmnic4]

esxcli network nic list output:

    [root@esx6:~] esxcli network nic list
    Name    PCI Device    Driver  Admin Status  Link Status  Speed  Duplex  MAC Address         MTU  Description
    ------  ------------  ------  ------------  -----------  -----  ------  -----------------  ----  --------------------------------------------------
    vmnic0  0000:00:19.0  e1000e  Up            Up             100  Full    b8:ae:ed:75:08:68  1500  Intel Corporation Ethernet Connection (3) I218-LM
    vmnic1  0000:04:00.0  igb     Up            Down             0  Half    00:1b:21:93:b3:b0  1500  Intel Corporation 82576 Gigabit Network Connection
    vmnic2  0000:04:00.1  igb     Up            Up            1000  Full    00:1b:21:93:b3:b1  1500  Intel Corporation 82576 Gigabit Network Connection
    vmnic3  0000:05:00.0  igb     Up            Down             0  Half    00:1b:21:93:b3:b2  1500  Intel Corporation 82576 Gigabit Network Connection
    vmnic4  0000:05:00.1  igb     Up            Down             0  Half    00:1b:21:93:b3:b3  1500  Intel Corporation 82576 Gigabit Network Connection
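If you want to check such a listing in a script, a minimal sketch could look like the one below. It parses text in the column layout shown above and reports which NICs have a link. The helper names (`Nic`, `parse_nic_list`) are invented for this example, the sample text is the output from my host, and the parser assumes that data rows start with "vmnic" and that only the description column contains spaces.

```python
from typing import List, NamedTuple


class Nic(NamedTuple):
    name: str
    pci: str
    driver: str
    link_up: bool
    speed_mbps: int
    mac: str
    description: str


# Output of "esxcli network nic list" as shown in this post.
SAMPLE = """\
Name    PCI Device    Driver  Admin Status  Link Status  Speed  Duplex  MAC Address         MTU  Description
------  ------------  ------  ------------  -----------  -----  ------  -----------------  ----  -----------
vmnic0  0000:00:19.0  e1000e  Up            Up             100  Full    b8:ae:ed:75:08:68  1500  Intel Corporation Ethernet Connection (3) I218-LM
vmnic1  0000:04:00.0  igb     Up            Down             0  Half    00:1b:21:93:b3:b0  1500  Intel Corporation 82576 Gigabit Network Connection
vmnic2  0000:04:00.1  igb     Up            Up            1000  Full    00:1b:21:93:b3:b1  1500  Intel Corporation 82576 Gigabit Network Connection
vmnic3  0000:05:00.0  igb     Up            Down             0  Half    00:1b:21:93:b3:b2  1500  Intel Corporation 82576 Gigabit Network Connection
vmnic4  0000:05:00.1  igb     Up            Down             0  Half    00:1b:21:93:b3:b3  1500  Intel Corporation 82576 Gigabit Network Connection
"""


def parse_nic_list(text: str) -> List[Nic]:
    """Parse NIC rows; header and separator lines are skipped."""
    nics = []
    for line in text.splitlines():
        if not line.startswith("vmnic"):  # assumption: rows start with "vmnic"
            continue
        fields = line.split(None, 9)  # the 10th field keeps the spaces of the description
        if len(fields) < 10:
            continue
        name, pci, driver, _admin, link, speed, _duplex, mac, _mtu, desc = fields
        nics.append(Nic(name, pci, driver, link == "Up", int(speed), mac, desc.strip()))
    return nics


nics = parse_nic_list(SAMPLE)
for nic in nics:
    state = f"{nic.speed_mbps} Mbit/s" if nic.link_up else "no link"
    print(f"{nic.name} ({nic.driver}, {nic.pci}): {state}")
```

All four ports that use the igb driver belong to the card behind the adapter; vmnic0 is the NUC's onboard port.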

vSphere Client Network Adapters:

Performance (Update: September 29, 2015)

Some words about the performance. The NUC's M.2 slot is based on PCI Express (PCIe) Gen 2 and has 2 lanes (X2). Each lane supports a data transfer speed of 5.0 GT/s (giga transfers per second). To get the actual usable bandwidth, you have to take into account that PCIe Gen 2 uses 8b/10b encoding, which means that it requires 10 bits on the wire to transfer 1 byte (8 bits).

5.0 GT/s * 2 (lanes) * 8/10 (encoding) = 8Gbit/s = 1000MB/s

The maximum bandwidth of the 5th Gen NUC's M.2 slot is 1000MB/s

According to the P14S-P14FP Extender Board documentation, it supports "PCI Express base Specification 1.1 (Up to 2.5Gpbs)". I'm not sure where the "2.5Gpbs" comes from; maybe it's a mistake. PCIe Gen 1.1 supports 2.5 GT/s per lane and the card supports two lanes. (It's an X4 slot because X2 slots do not exist.)

2.5 GT/s * 2 (lanes) * 8/10 (encoding) = 4Gbit/s = 500MB/s

The maximum bandwidth of the P14S-P14FP adapter is 500MB/s
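Both calculations follow the same formula, so they can be reproduced with a few lines of Python. This is just the arithmetic from above; the function name `pcie_bandwidth_mb_s` is made up for this sketch, and the 8/10 factor only applies to PCIe generations that use 8b/10b encoding (Gen 1 and Gen 2).

```python
def pcie_bandwidth_mb_s(gt_per_s: float, lanes: int) -> float:
    """Usable bandwidth in MB/s for a PCIe link with 8b/10b encoding.

    gt_per_s: transfer rate per lane in GT/s (2.5 for Gen 1.x, 5.0 for Gen 2)
    lanes:    number of lanes in the link
    """
    gbit_per_s = gt_per_s * lanes * 8 / 10  # 8 payload bits per 10 line bits
    return gbit_per_s * 1000 / 8            # Gbit/s -> MB/s


nuc_slot = pcie_bandwidth_mb_s(5.0, 2)  # 5th Gen NUC M.2 slot (Gen 2, X2)
adapter = pcie_bandwidth_mb_s(2.5, 2)   # P14S-P14FP per its documentation (Gen 1.1, X2)

print(f"NUC M.2 slot: {nuc_slot:.0f} MB/s")
print(f"Adapter:      {adapter:.0f} MB/s")
# Assumption: the slower side limits the link as a whole.
print(f"Effective:    {min(nuc_slot, adapter):.0f} MB/s")
```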

Very cool write up. Just an FYI, two models of 5th gen NUC (NUC5PPYH, NUC5CPYH) were released later than the initial models (5i3, 5i5, 5i7s), and don't have any free M.2 slot out of the box. They only have the M.2 Key E (2230) slot, and it's pre-populated with an Intel Wireless-AC 3165 module.

Really interesting read, even for someone without a NUC, just a homelab obsession! Good thinking outside of the box...get it!? Ehehehe...

Such a fun read, wow, how innovative/creative/bold, pushing the boundaries of what can be accomplished at obtainable prices.

Updated the post with some performance information.

Very interesting post!

I'm currently building a NAS using an intel NUC, and I have ordered a P15S-P15F to house a mini-PCIe two port SATA controller.

In theory this should work, as all the adapter does is bridge the M.2 PCIe interface to a mini-PCIe slot.

Did you manage to get it to work?

The adapter does not work for me. It works neither with an mPCIe SSD nor with the Syba gigabit adapter. As far as I know, there are incompatibilities between M.2 slots even when the keys fit physically. They "can" provide the interfaces defined in the standard, but they are not bound to do so.

Let me know if you get it to work ;-)

Has anyone come up with a housing?

While searching the tubes I found an adapter from m.2 to NIC. They have both 1 and 2 ports.

http://www.innodisk.com/Product/ProductDetail.aspx?SUQwMT03MGY1OWE1Ny1iMDlkLTQ2NmMtOGYyYi1jMDFkYjA5YjEyZTMmSUQwMj1hZTA5Yzc0OC1jN2JhLTRiZjMtOTJiYy01ZDBjZTU0YzE2ODgmSUQwMz04N2YwZTI1Yy0yNGJiLTQ2NTgtYmYxMS01MTM1NTM2YWQ2NjMmSUQ9ZjY1NGEyYzItNjU4My00ZjcxLWJmMTYtYWEyYjFhY2ZkN2EyJmRmbF9JRD0wMDE%3d

If the link doesn't work, go to innodisk.com -> Communication module -> EGUL-G201

I'm thinking of buying the new 6th gen nuc and install this for using pfsense :) maybe it will work..

Hi Martin did you follow up with your order? is it working?

Hi, I spoke with Eddie of Innodisk and he told me that these cards would not fit in the NUCs. In February, a new M.2 interface based on the PCIe standard for M.2 will be available. I am trying to get my hands on a pair of them for testing to see if they would work. If I can pull it off, I will do a review here.

So crossing fingers.

STeph

Hello everyone. Here's my progress update:

Both the P15S-P15F adapter and the SATA controller have arrived so I was finally able to do some testing.

Despite the power LED on the adapter being lit, the SATA controller does NOT work.

I have then attempted to use a combo adapter which uses USB for bluetooth and PCIe for wi-fi. Interestingly, only the USB bluetooth adapter is detected and not the wifi adapter that is using the PCIe interface.

Upon closer inspection of the specification of the m.2 wifi adapter that came with the unit I discovered that it was in fact using a PCIe lane.

To sum things up - I know for fact that there is a functional PCIe lane in the M.2 port. The adapter fits. Power and USB work, PCIe doesn't. Intel refuse to provide support and claim that the product was not intended to be used in this configuration.

My conclusion is that Intel are simply using a PCIe whitelist. Otherwise, why would one PCIe device work and another wouldn't? Unfortunately, the practical meaning of this is that only M.2 devices that were approved by Intel may be used at this stage. Very bad news.

My solution at this stage is to use a USB 3.0 to SATA adapter. This is by no means ideal, but it works :)

Martin - very interesting discovery. Please let us know if you can find a reseller.

Manufacturer is not obligated to implement the PCIe, and has most likely only connected USB to that port.

Instead of all this bother, why not just use VLANs over the single Ethernet port? Makes sense to me.

Performance and redundancy.

In terms of a home network, your 1Gb port will suffice, so performance won't be an issue, but on redundancy I can see your point.

When virtual machines are stored on local storage 1Gb/s is enough but I also share the port with NFS traffic as some VMs are stored on an external storage.

Hello folks!

Any updates on this? Anyone found a solid solution yet?

Cheers.

Looks like two options are available, but as of now they are not working for other people.

1. EGUL-G201 from innodisk.com

2. M.2 to PCIe X2 Edge Extender Board (P14S-P14FP)

The only other option I can see would be to use usb3 Ethernet ports. Put them in pass through mode.

I believe the point of this post is to add NICs to the NUC to be used at the hypervisor level. Passthrough is great for a single VM.

Hypervisor will see it as a NIC without passthrough if it supports the chipset on the usb3 Ethernet.

Have you had any luck finding or compiling usb3 NICs drivers for esxi 6?

To be honest, the theory is correct but I haven't looked into it yet as most on eBay don't list the chipset.

Searching the net, I found this press-release:

http://g2digital.co.uk/development-project-m-2-ethernet-card-for-intel-nuc/

Hopefully someone here could test this card once it's out...

I had the opportunity to test a new dual-port M.2 Ethernet card from Innodisk.com and so far it's a perfect match. It gives the NUC 2 extra Ethernet ports without any external PSU or boards. And the best part is that it's using an Intel chipset, so there are no drivers to install in ESX.

So far it's the best solution I have found. I also had some insight from Eddie Chiu from Innodisk that they are working on a NUC-specific solution that will have all the connectors and fittings for the Intel NUC system. So be patient, some interesting stuff is coming.

I will also post a full review of the current solution I tested.

(www.innodisk.com)

Part number :EGPL-G201 for the M.2 2280 module

:EGUL-G201 for the M.2 2230 module

Stephane.

Hi Stephane, look forward to your review with innodisk NIC and ESX. I am waiting the same solution.

Innodisk (and the distributors) don't sell to private persons at the moment ... and it seems they won't ...

M.2 Fiber NIC 1G was showcased at DellEMC World in 2016: https://twitter.com/DellTPP/status/788452852410626048

Actually, there's a 12V header. It's connector#4 of

https://www.intel.com/content/dam/www/public/us/en/documents/product-briefs/nuc-kit-nuc5i5myhe-board-nuc5i5mybe-brief.pdf

Wouldn't this adapter from AliExpress work? A lot cheaper.

"standard PCI-e 1x/4x card in M.2 NGFF M key slot in the Desktop or Laptop.

Transparent to the operating system and does not require any software drivers.

M.2 Specification Revision 0.9-3

Serial ATA Spec. Reversion 3.2

Support 2260/2280 type M.2 SSD module slot.

Only Support M.2 Socket 3 PCI-e-based slot."

https://www.aliexpress.com/store/product/Free-shipping-NGFF-to-PCI-e-4x-Slot-Riser-Card-M-key-M-2-SSD-Port/710969_32230451080.html

Yes (But I don't know if this adapter works). I didn't find other adapters when I created this article.

However, I prefer the USB solution for networking.

No. If you look at the picture carefully, the notch is on the right hand side (M key only), and won't fit in a B-key slot.