When troubleshooting network problems on ESXi hosts, you often want to specify the outgoing VMkernel adapter. As explained here, you can ping from a specific VMkernel adapter with the -I parameter. In vSphere 6.0, or with VXLAN activated, this might not work as expected and can fail with the following error:

[root@esx:~] ping -I vmk1 10.1.1.1

Unknown interface 'vmk1': Invalid argument

The problem is related to the multiple TCP/IP stack feature introduced in vSphere 6.0. To ping from a VMkernel adapter that is not in the default stack (defaultTcpipStack), you have to specify the NetStack manually with the -S parameter.

A ping from a VMkernel interface that is not part of the default stack (defaultTcpipStack) fails with the following error message:

[root@esx:~] ping -I vmk1 10.1.1.1

Unknown interface 'vmk1': Invalid argument

Additionally it's not even possible to ping local interfaces:

    [root@esx5:~] esxcli network ip interface ipv4 get
    Name  IPv4 Address    IPv4 Netmask   IPv4 Broadcast   Address Type  DHCP DNS
    ----  --------------  -------------  ---------------  ------------  --------
    vmk0  192.168.222.25  255.255.255.0  192.168.222.255  STATIC        false
    vmk1  10.1.1.5        255.255.255.0  10.1.1.255       STATIC        false
    vmk2  192.168.222.56  255.255.255.0  192.168.222.255  STATIC        false
    [root@esx5:~] ping 10.1.1.5
    PING 10.1.1.5 (10.1.1.5): 56 data bytes
    --- 10.1.1.5 ping statistics ---
    4 packets transmitted, 0 packets received, 100% packet loss

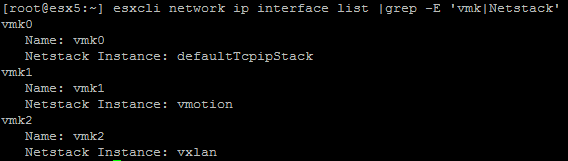

vmk1 is the interface I use for vMotion, so it's not part of the defaultTcpipStack. You can identify the TCP/IP Stack with the following commands:

- esxcfg-vmknic -l (NetStack Column)

- esxcli network ip interface list (Netstack Instance)

- esxcli network ip interface list |grep -E 'vmk|Netstack' (Filtered Version)
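The lookup can also be scripted. The following is a minimal sketch, not a tested tool: the function name `netstack_of` is made up for this example, and it assumes that `esxcli network ip interface list` prints one `Name: vmkN` line and one `Netstack Instance: <stack>` line per interface, as the grep filter above suggests.

```shell
# Hypothetical helper: print the netstack instance of one VMkernel interface.
# Reads the output of `esxcli network ip interface list` on stdin.
# Assumption: each interface block contains "Name: vmkN" followed later by
# "Netstack Instance: <stack>".
netstack_of() {
    awk -v want="$1" '
        $1 == "Name:" { name = $2 }
        $1 == "Netstack" && $2 == "Instance:" && name == want { print $3; exit }
    '
}

# Usage on a host (not executed here):
#   esxcli network ip interface list | netstack_of vmk1
```

If the interface does not exist, the function prints nothing, so an empty result should be treated as "not found" rather than as the default stack.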

After identifying that vmk1 uses the TCP/IP stack named vmotion, you can ping successfully by passing the correct NetStack with the -S [NetStack] parameter.

    [root@esx5:~] ping -I vmk1 -S vmotion 10.1.1.1
    PING 10.1.1.1 (10.1.1.1): 56 data bytes
    64 bytes from 10.1.1.1: icmp_seq=0 ttl=64 time=0.299 ms
    64 bytes from 10.1.1.1: icmp_seq=1 ttl=64 time=0.328 ms
    64 bytes from 10.1.1.1: icmp_seq=2 ttl=64 time=0.653 ms
    --- 10.1.1.1 ping statistics ---
    3 packets transmitted, 3 packets received, 0% packet loss
    round-trip min/avg/max = 0.299/0.427/0.653 ms

Local pings in the correct NetStack are also possible:

    [root@esx5:~] ping -S vmotion 10.1.1.5
    PING 10.1.1.5 (10.1.1.5): 56 data bytes
    64 bytes from 10.1.1.5: icmp_seq=0 ttl=64 time=0.538 ms
    64 bytes from 10.1.1.5: icmp_seq=1 ttl=64 time=0.041 ms
    64 bytes from 10.1.1.5: icmp_seq=2 ttl=64 time=0.034 ms
    --- 10.1.1.5 ping statistics ---
    3 packets transmitted, 3 packets received, 0% packet loss
    round-trip min/avg/max = 0.034/0.204/0.538 ms
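The rule from this post (add -S only when the adapter is outside defaultTcpipStack) could be wrapped in a small helper. This is only a sketch: `ping_cmd` is a hypothetical name, and it prints the command line instead of running it so you can check it first.

```shell
# Hypothetical helper: print the ping command for an interface/netstack pair.
# $1 = VMkernel interface, $2 = netstack name (may be empty), $3 = target IP.
# -S is added only when the netstack is not defaultTcpipStack.
ping_cmd() {
    if [ -z "$2" ] || [ "$2" = "defaultTcpipStack" ]; then
        echo "ping -I $1 $3"
    else
        echo "ping -I $1 -S $2 $3"
    fi
}

# Usage (not executed here):
#   ping_cmd vmk1 vmotion 10.1.1.1
```

Printing instead of executing keeps the helper harmless on a production host; pipe the result to `sh` once the command looks right.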

THANK YOU!!!!

Thanks for sharing... I was guessing that something in ESXi 6.x changed because I had done this dozens of times in ESXi 5.x and even 4.x.

Cheers!

Awesome, thanks for posting this!

To use vmkping with the vmotion netstack, you can do this:

vmkping ++netstack=vmotion -I vmk3 192.168.2.16

(Thanks to http://www.enterprisedaddy.com/2016/10/vmotion-tcpip-stack-layer-3-vmotion/)

Make sure you have assigned the vMotion IP gateway in the TCP/IP stack.

Go to Host --> Configuration --> Networking --> TCP/IP Configuration --> TCP/IP Stack
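The gateway can probably be checked from the shell as well. This is a rough sketch with two assumptions: that `esxcli network ip route ipv4 list --netstack=vmotion` is available on your build, and that it prints a table with Network, Netmask, Gateway and Interface columns in which the default route has `default` in the first column. The function name `default_gateway` is made up for this example.

```shell
# Hypothetical helper: print the gateway of the default route.
# Reads the routing table of one netstack on stdin.
# Assumption: columns are Network, Netmask, Gateway, Interface, ... and the
# default route is the row whose first column is "default".
default_gateway() {
    awk '$1 == "default" { print $3; exit }'
}

# Usage on a host (not executed here):
#   esxcli network ip route ipv4 list --netstack=vmotion | default_gateway
```

An empty result would suggest that no default gateway is set for that stack.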