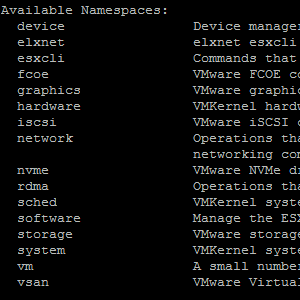

In vSphere 6.5, the esxcli command-line interface has been extended with new features. This post introduces the new and extended namespaces. Notable changes in esxcli 6.5 are:

- USB passthrough configuration

- NVMe device status and configuration

- VIB signature verification

- Storage adapter capabilities

- Device capacity information

- VMFS6 reclaim configuration

- vSAN iSCSI configuration

- Physical NIC coalesce and queue configuration

- WBEM configuration

esxcli device driver list

Displays storage and network devices with their current driver. Device drivers can also be identified with "#esxcli network nic list" or "#esxcli storage core adapter list", but the new command displays all devices at a glance.

# esxcli device driver list

| Device | Driver | Status | KB Article |
|---------|--------|--------|------------|
| vmhba0 | nvme | normal | |
| vmnic0 | ne1000 | normal | |
| vmhba32 | vmkusb | normal | |
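To pick out the devices bound to one particular driver, the tabular output can be filtered with awk. The following is a minimal sketch that assumes the column layout shown above (a header line, a dashed separator line, then the device in column 1 and the driver in column 2). `devices_for_driver` is a hypothetical helper name and not part of esxcli.

```shell
# Hypothetical helper: print the devices that use a given driver.
# Reads `esxcli device driver list` output on stdin.
devices_for_driver() {
    # NR > 2 skips the header line and the dashed separator line.
    awk -v drv="$1" 'NR > 2 && $2 == drv { print $1 }'
}

# Usage on an ESXi host (not executed here):
#   esxcli device driver list | devices_for_driver nvme
```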

esxcli graphics

The graphics namespace has been extended by four commands: three to display, refresh, and set host graphics properties, and one to view graphics device statistics.

- esxcli graphics device stats list

- esxcli graphics host get

- esxcli graphics host refresh

- esxcli graphics host set

# esxcli graphics device stats list

0000:00:02.0

Vendor Name: Intel Corporation

Device Name: Iris Pro Graphics 580

Driver Version: None

Utilization (%): 0

Memory Used (%): 0

Temperature: 0 degrees Celsius

# esxcli graphics host get

Default Graphics Type: Shared

Shared Passthru Assignment Policy: Performance

# esxcli graphics host set --help

Usage: esxcli graphics host set [cmd options]

Description:

set Set host graphics properties.

Cmd options:

--default-type= Host default graphics type.

--shared-passthru-assignment-policy=

Shared passthru assignment policy.

esxcli hardware usb passthrough

Enables USB devices for passthrough. The command requires device information in the format "Bus:Dev:vendorId:productId", as shown by the list command. To connect devices to a VM, the usbarbitrator service must be running.

- esxcli hardware usb passthrough device list

- esxcli hardware usb passthrough device enable

- esxcli hardware usb passthrough device disable

# esxcli hardware usb passthrough device list

| Bus | Dev | VendorId | ProductId | Enabled | Can Connect to VM | Name |
|-----|-----|----------|-----------|---------|-------------------|------|
| 2 | 2 | b95 | 1790 | false | yes | ASIX Electronics Corp. AX88179 Gigabit Ethernet |
| 2 | 3 | b95 | 1790 | false | yes | ASIX Electronics Corp. AX88179 Gigabit Ethernet |
| 1 | 2 | 930 | 6545 | false | yes | Toshiba Corp. Kingston DataTraveler 102 Flash Drive / HEMA Flash |
| 1 | 3 | 8087 | a2b | true | yes | Intel Corp. |

# esxcli hardware usb passthrough device enable -d 2:2:b95:1790
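The "Bus:Dev:vendorId:productId" string expected by the enable and disable commands can be assembled from the first four columns of the list output. This is a small sketch under the assumption that the output has two header lines followed by whitespace-separated columns in the order shown above. `usb_passthrough_ids` is a hypothetical helper name.

```shell
# Hypothetical helper: convert list output into Bus:Dev:vendorId:productId strings.
# Reads `esxcli hardware usb passthrough device list` output on stdin.
usb_passthrough_ids() {
    # Skip the header and separator lines, then join the first four columns.
    awk 'NR > 2 && NF >= 4 { print $1 ":" $2 ":" $3 ":" $4 }'
}

# Usage on an ESXi host (not executed here):
#   esxcli hardware usb passthrough device list | usb_passthrough_ids
#   esxcli hardware usb passthrough device enable -d 2:2:b95:1790
```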

esxcli nvme

A new namespace has been introduced to get information about and manage NVMe devices.

- esxcli nvme device feature [aec|ar|er|ic|ivc|nq|pm|tt|vwc|wa] [set|get]

- esxcli nvme device firmware activate

- esxcli nvme device firmware download

- esxcli nvme device get

- esxcli nvme device list

- esxcli nvme device log [error|fwslot|smart] get

- esxcli nvme device namespace [format|get|list]

- esxcli nvme driver loglevel set

# esxcli nvme device list

| HBA Name | Status | Signature |
|----------|--------|-----------------------|
| vmhba0 | Online | nvmeMgmt-nvme00610000 |

# esxcli nvme device get -A vmhba0

Controller Identify Info:

PCIVID: 144d

PCISSVID: 144d

Serial Number: S2GLNCAH104752J

Model Number: Samsung SSD 950 PRO 256GB

Firmware Revision: 1B0QBXX7

Recommended Arbitration Burst: 2

IEEE OUI Identifier: 002538

Controller Associated with an SR-IOV Virtual Function: false

Controller Associated with a PCI Function: true

NVM Subsystem May Contain Two or More Controllers: false

NVM Subsystem Contains Only One Controller: true

NVM Subsystem May Contain Two or More PCIe Ports: false

NVM Subsystem Contains Only One PCIe Port: true

Max Data Transfer Size: 5

Controller ID: 1

Version: 0.0

RTD3 Resume Latency: 0 us

RTD3 Entry Latency: 0 us

Optional Namespace Attribute Changed Event Support: false

Namespace Management and Attachment Support: false

Firmware Activate and Download Support: true

Format NVM Support: true

Security Send and Receive Support: true

Abort Command Limit: 7

Async Event Request Limit: 3

Firmware Activate Without Reset Support: false

Firmware Slot Number: 3

The First Slot Is Read-only: false

Command Effects Log Page Support: false

SMART/Health Information Log Page per Namespace Support: true

Error Log Page Entries: 63

Number of Power States Support: 4

Format of Admin Vendor Specific Commands Is Same: true

Format of Admin Vendor Specific Commands Is Vendor Specific: false

Autonomous Power State Transitions Support: true

Warning Composite Temperature Threshold: 0

Critical Composite Temperature Threshold: 0

Max Time for Firmware Activation: 0 * 100ms

Host Memory Buffer Preferred Size: 0 * 4KB

Host Memory Buffer Min Size: 0 * 4KB

Total NVM Capacity: 0x0

Unallocated NVM Capacity: 0x0

Access Size: 0 * 512B

Total Size: 0 * 128KB

Authentication Method: 0

Number of RPMB Units: 0

Max Submission Queue Entry Size: 64 Bytes

Required Submission Queue Entry Size: 64 Bytes

Max Completion Queue Entry Size: 16 Bytes

Required Completion Queue Entry Size: 16 Bytes

Number of Namespaces: 1

Reservation Support: false

Save/Select Field in Set/Get Feature Support: true

Write Zeroes Command Support: true

Dataset Management Command Support: true

Write Uncorrectable Command Support: true

Compare Command Support: true

Fused Operation Support: false

Cryptographic Erase as Part of Secure Erase Support: true

Cryptographic Erase and User Data Erase to All Namespaces: false

Cryptographic Erase and User Data Erase to One Particular Namespace: true

Format Operation to All Namespaces: false

Format Opertaion to One Particular Namespace: true

Volatile Write Cache Is Present: true

Atomic Write Unit Normal: 255 Logical Blocks

Atomic Write Unit Power Fail: 0 Logical Blocks

Format of All NVM Vendor Specific Commands Is Same: true

Format of All NVM Vendor Specific Commands Is Vendor Specific: false

Atomic Compare and Write Unit: 0

SGL Length Able to Larger than Data Amount: false

SGL Length Shall Be Equal to Data Amount: true

Byte Aligned Contiguous Physical Buffer of Metadata Support: false

SGL Bit Bucket Descriptor Support: false

SGL for NVM Command Set Support: false
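Because the controller information is a long list of "Key: Value" pairs, it can be handy to extract a single field. The sketch below assumes that esxcli prints one field per line, optionally indented. `nvme_field` is a hypothetical helper name and not part of the nvme namespace.

```shell
# Hypothetical helper: print the value of one "Key: Value" field.
# Reads `esxcli nvme device get -A <adapter>` output on stdin.
nvme_field() {
    awk -v key="$1" '
        {
            sub(/^[ \t]+/, "")              # drop leading indentation
            prefix = key ": "
            if (index($0, prefix) == 1) {   # line starts with "Key: "
                print substr($0, length(prefix) + 1)
                exit
            }
        }'
}

# Usage on an ESXi host (not executed here):
#   esxcli nvme device get -A vmhba0 | nvme_field "Model Number"
```

A plain string comparison is used instead of a regular expression so that keys containing characters such as "/" are matched literally.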

esxcli software vib signature verify

Verifies the signatures of all installed VIB packages and displays the name, version, vendor, acceptance level and the result of signature verification for each of them.

esxcli storage core adapter capabilities list

Lists the capabilities of the SCSI HBAs in the system.

# esxcli storage core adapter capabilities list

vmhba0

Handles Second-Level-LUNs: false

Supports Data Integrity: false

Provides Own Completion Worlds: true

Handles Sense Data in Good and Error Conditions: false

vmhba64

Handles Second-Level-LUNs: true

Supports Data Integrity: false

Provides Own Completion Worlds: true

Handles Sense Data in Good and Error Conditions: true

esxcli storage core device capacity list

Lists capacity information for the known storage devices. The list includes Physical Blocksize, Logical Blocksize, Logical Block Count, Size and Format Type.

# esxcli storage core device capacity list

| Device | Physical Blocksize | Logical Blocksize | Logical Block Count | Size | Format Type |
|--------|--------------------|-------------------|---------------------|------|-------------|
| naa.6589cfc00000001917172ff67b90be59 | 131072 | 512 | 104857600 | 51200 MiB | Unknown |
| naa.6589cfc0000000572b71f35019e9c31f | 131072 | 512 | 838860800 | 409600 MiB | Unknown |
| naa.6589cfc000000ed999506ae18cee259a | 131072 | 512 | 1073741824 | 524288 MiB | Unknown |
| naa.6589cfc000000c104ad79fde586c49a9 | 131072 | 512 | 838860800 | 409600 MiB | Unknown |
| mpx.vmhba32:C0:T0:L0 | 512 | 512 | 30489408 | 14887 MiB | 512n |
| t10.NVMe____Samsung_SSD_950_PRO_256GB | 4096 | 512 | 500118192 | 244198 MiB | 512e |
| naa.6589cfc000000656da382d1be31512d2 | 131072 | 512 | 1073741824 | 524288 MiB | Unknown |
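The Size values above are consistent with Logical Blocksize multiplied by Logical Block Count, expressed in MiB and rounded down (for example, 512 × 104857600 / 1048576 = 51200). The following sketch recomputes that value from the raw columns. It assumes the column order shown above, and `capacity_mib` is a hypothetical helper name.

```shell
# Hypothetical helper: recompute the size in MiB for each device.
# Reads `esxcli storage core device capacity list` output on stdin.
capacity_mib() {
    # Columns: 1=Device, 3=Logical Blocksize, 4=Logical Block Count.
    # NR > 2 skips the header line and the dashed separator line.
    awk 'NR > 2 && NF >= 4 { printf "%s %d\n", $1, int($3 * $4 / 1048576) }'
}

# Usage on an ESXi host (not executed here):
#   esxcli storage core device capacity list | capacity_mib
```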

esxcli storage core device latencythreshold

List or set the latency sensitive threshold for storage devices.

# esxcli storage core device latencythreshold list

| Device | Latency Sensitive Threshold |
|--------|-----------------------------|
| naa.6589cfc00000001917172ff67b90be59 | 0 milliseconds |
| naa.6589cfc0000000572b71f35019e9c31f | 0 milliseconds |
| naa.6589cfc000000ed999506ae18cee259a | 0 milliseconds |
| naa.6589cfc000000c104ad79fde586c49a9 | 0 milliseconds |
| mpx.vmhba32:C0:T0:L0 | 0 milliseconds |
| t10.NVMe____Samsung_SSD_950_PRO_256GB | 0 milliseconds |
| naa.6589cfc000000656da382d1be31512d2 | 0 milliseconds |

# esxcli storage core device latencythreshold set --help

Usage: esxcli storage core device latencythreshold set [cmd options]

Description:

set Set device's latency sensitive threshold (in milliseconds).

If IO latency exceeds the threshold, new IOs will use default IO scheduler.

Cmd options:

-d|--device=<str> Select the device to set its latency sensitive threshold. (required)

--latency-sensitive-threshold=<long> Set device's latency sensitive threshold (in milliseconds). (required)

esxcli storage vmfs reclaim config

The new filesystem VMFS6, introduced in vSphere 6.5, supports automatic space reclamation, which can be configured with this command. The configuration parameters are granularity and priority.

# esxcli storage vmfs reclaim config get -l Datastore

Reclaim Granularity: 1048576 Bytes

Reclaim Priority: low

# esxcli storage vmfs reclaim config set --help

Usage: esxcli storage vmfs reclaim config set [cmd options]

Description:

set Set space reclamation configuration parameters

Cmd options:

-g|--reclaim-granularity=<long>

Minimum granularity of automatic space reclamation in bytes

-p|--reclaim-priority=<str>

Priority of automatic space reclamation. Supported options are [none, low, medium, high].

-l|--volume-label=<str>

The label of the target VMFS volume.

-u|--volume-uuid=<str>

The uuid of the target VMFS volume.
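Based on the options listed in the help output above, a reclaim priority change could be scripted as follows. This is only a sketch: `reclaim_set_cmd` is a hypothetical wrapper that checks the priority against the documented values and prints the resulting command instead of executing it, and "Datastore" is the example volume label used above.

```shell
# Hypothetical wrapper: validate the priority, then print the esxcli command.
# It prints the command rather than running it, so it can be reviewed first.
reclaim_set_cmd() {
    label="$1"
    priority="$2"
    case "$priority" in
        none|low|medium|high) ;;   # values listed in the help output
        *) echo "unsupported priority: $priority" >&2; return 1 ;;
    esac
    printf 'esxcli storage vmfs reclaim config set -l %s -p %s\n' "$label" "$priority"
}

# Usage (prints the command; labels containing spaces would need quoting):
#   reclaim_set_cmd Datastore low
```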

esxcli system stats installtime get

Displays the date and time when the system was installed. The value does not change on subsequent updates, but if you upgrade an older version of ESXi to 6.5, the command displays the ESXi 6.5 install time.

# esxcli system stats installtime get

2016-11-15T13:08:42

esxcli system wbem

Display and configure the WBEM Agent.

- esxcli system wbem get

- esxcli system wbem set

- esxcli system wbem provider list

- esxcli system wbem provider set

esxcli vsan iscsi

vSAN 6.5 extends workload support to physical or virtual servers with an iSCSI target service. iSCSI targets on vSAN are managed in the same way as other objects, with Storage Policy Based Management (SPBM). The new vSAN iSCSI namespace allows initiator and target configuration.

- esxcli vsan iscsi defaultconfig

- esxcli vsan iscsi homeobject [create|delete]

- esxcli vsan iscsi homeobject [get|set]

- esxcli vsan iscsi initiatorgroup [add|get]

- esxcli vsan iscsi initiatorgroup initiator [add|remove]

- esxcli vsan iscsi initiatorgroup [list|remove]

- esxcli vsan iscsi status [get|set]

- esxcli vsan iscsi target [add|get|list]

- esxcli vsan iscsi target lun [add|get|list|remove|set]

- esxcli vsan iscsi target [remove|set]

esxcli network nic coalesce/queue

The network namespace has been extended with coalesce and queue parameters that can be configured on a physical NIC.

- esxcli network nic coalesce get

- esxcli network nic coalesce high get

- esxcli network nic coalesce high set

- esxcli network nic coalesce low get

- esxcli network nic coalesce low set

- esxcli network nic queue count get

- esxcli network nic queue count set

- esxcli network nic queue filterclass list

- esxcli network nic queue loadbalancer list

- esxcli network nic queue loadbalancer set

# esxcli network nic coalesce get

| NIC | RX microseconds | RX maximum frames | TX microseconds | TX Maximum frames | Adaptive RX | Adaptive TX | Sample interval seconds |
|-----|-----------------|-------------------|-----------------|-------------------|-------------|-------------|-------------------------|
| vmnic0 | N/A | N/A | N/A | N/A | N/A | N/A | N/A |
| vusb0 | N/A | N/A | N/A | N/A | N/A | N/A | N/A |
| vusb1 | N/A | N/A | N/A | N/A | N/A | N/A | N/A |

# esxcli network nic coalesce high set --help

Usage: esxcli network nic coalesce high set [cmd options]

Description:

set Set parameters to control the behavior of a NIC when it sends or receives packets at high packet rate.

Cmd options:

-p|--pkt-rate= The high packet rate measured in number of packets per second. When packet rate is above this parameter, the RX/TX coalescing parameters configured by this command are used.

-R|--rx-max-frames=

The maximum number of RX packets to delay an RX interrupt after they arrive under high packet rate conditions.

-r|--rx-usecs= The number of microseconds to delay an RX interrupt after a packet arrives under high packet rate conditions.

-T|--tx-max-frames=

The maximum number of TX packets to delay an TX interrupt after they are sent under high packet rate conditions.

-t|--tx-usecs= The number of microseconds to delay a TX interrupt after a packet is sent under high packet rate conditions.

-n|--vmnic= Name of the vmnic for which parameters should be set. (required)

# esxcli network nic queue count get

| NIC | Tx netqueue count | Rx netqueue count |
|-----|-------------------|-------------------|
| vmnic0 | 1 | 1 |
| vusb0 | 0 | 0 |
| vusb1 | 0 | 0 |

Note that the WBEM (Web-Based Enterprise Management) services sfcbd and openwsmand are now managed by 'esxcli system wbem set'. On newly installed stock systems, these services are off by default. If third-party CIM provider VIBs are installed, the WBEM services start automatically.