Intel's Raptor Lake-based 13th Gen "Arena Canyon" NUC Professional series is now available to purchase. This article takes a deeper look at its capability to run a virtualization lab based on the VMware ESXi hypervisor. While VMware does not officially support NUCs, they are very common in home labs and test environments. They are small, silent, transportable, and have very low power consumption, making them a great server for running your cost-aware home lab. While there are many NUC-like clones on the market today, the original Intel NUC is still very popular due to its superior endurance. My first "Pro" NUC, the NUC5i5MYHE, is currently 8 years old and still runs without problems.

The 13th Gen Arena Canyon is available with i3, i5, and i7 CPUs. The i5 and i7 versions are also available with vPro Support.

- NUC13ANHv7 / NUC13ANKv7 (Intel Core i7-1370P vPro - 6 x up to 5.20 GHz / 8 x up to 3.90 GHz)

- NUC13ANHv5 / NUC13ANKv5 (Intel Core i5-1350P vPro - 4 x up to 4.70 GHz / 8 x up to 3.50 GHz)

- NUC13ANHi7 / NUC13ANKi7 (Intel Core i7-1360P - 4 x up to 5.00 GHz / 8 x up to 3.70 GHz)

- NUC13ANHi5 / NUC13ANKi5 (Intel Core i5-1340P - 4 x up to 4.60 GHz / 8 x up to 3.40 GHz)

- NUC13ANHi3 / NUC13ANKi3 (Intel Core i3-1315P - 2 x up to 4.50 GHz / 4 x up to 3.30 GHz)

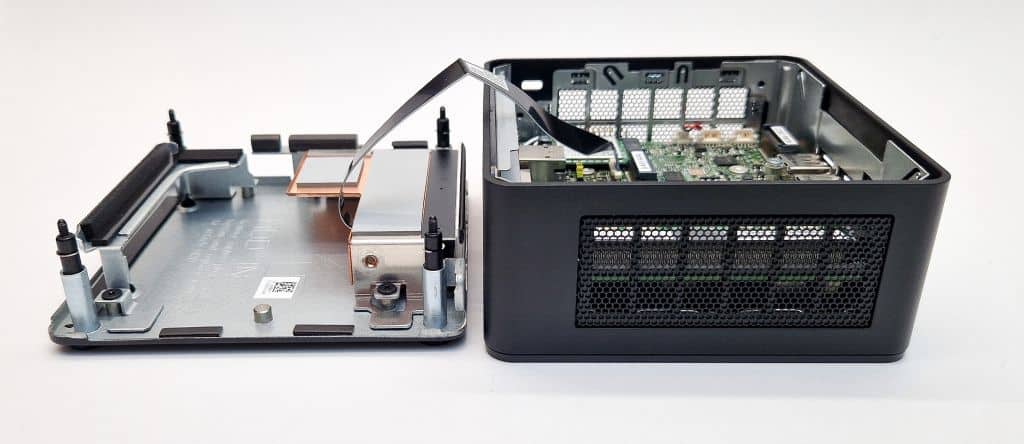

The Arena Canyon is Intel's professional line in its 13th NUC generation and the successor to the 12th Gen Wall Street Canyon. This system is intended for professional use cases and has significant enhancements for your home lab running ESXi. As with every generation since the 11th, it has an expansion bay that allows you to install a second network adapter.

Features

- 13th Gen Raptor Lake CPU

- Intel vPro Support (v5/v7 Models)

- TPM 2.0 (vPro Only)

- Up to 64GB of DDR4 SO-DIMM memory

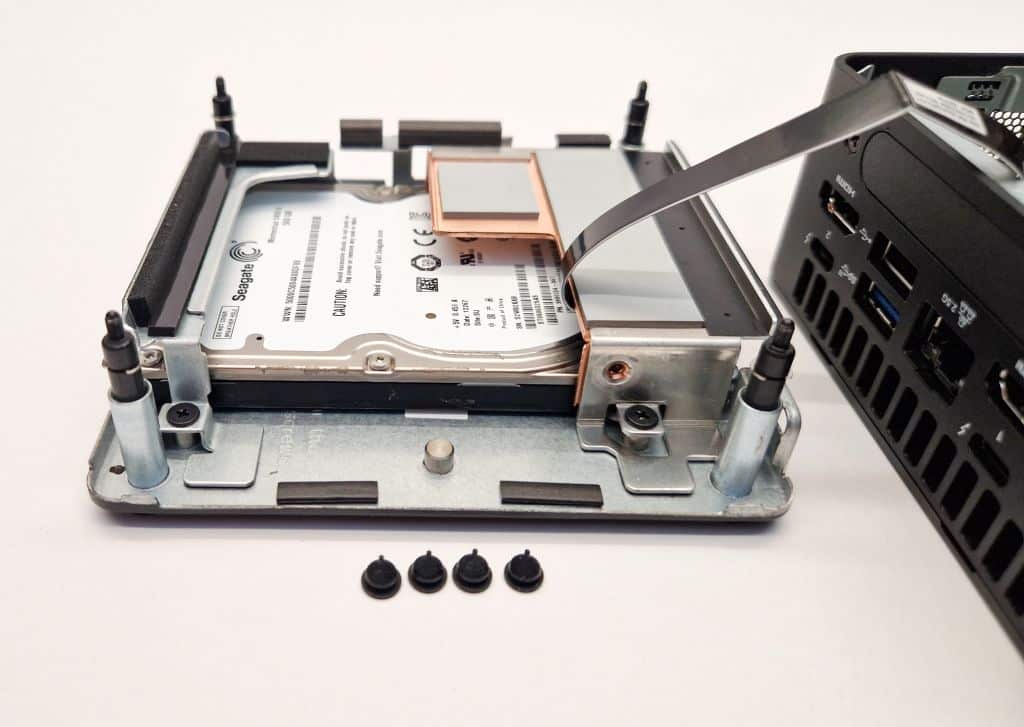

- Available with and without a 2.5″ HDD slot

- M.2 Slot (22x80 PCIe x4 Gen4)

- M.2 Slot (22x42 for SSD or expansion module)

- Intel 2.5 Gigabit Network Adapter (i226-LM)

- Expansion bay with second 2.5 GbE NIC option

- Two Thunderbolt ports (incl. USB 4.0)

- Three USB 3.2 Gen2 ports

- Qualified for 24/7 Operation

- Select SKUs with five-year product availability

- 120W/20VDC power adapter for Core i7, i5

- 90W/19VDC power adapter for Core i3

Comparison with predecessor (Wall Street Canyon)

- Not much news for the 13th Gen. It's just faster.

- Available with 6 (Power)-Core CPU (vPro i7)

- For long-term support, it has select SKUs with five-year product availability.

To get an ESXi Host installed you additionally need:

- Memory (1.2V DDR4-3200 SODIMM)

- M.2 SSD (22×80), 2.5″ HDD or USB-Stick

Model comparison

| Tall Kit (2.5") | NUC13ANHv7 | NUC13ANHv5 | NUC13ANHi7 | NUC13ANHi5 |
|---|---|---|---|---|
| Slim Kit | NUC13ANKv7 | NUC13ANKv5 | NUC13ANKi7 | NUC13ANKi5 |
| Architecture | Raptor Lake (Intel 7) | Raptor Lake (Intel 7) | Raptor Lake (Intel 7) | Raptor Lake (Intel 7) |
| CPU | Intel Core i7-1370P vPro | Intel Core i5-1350P vPro | Intel Core i7-1360P | Intel Core i5-1340P |
| Performance Cores | 6x up to 5.2 GHz | 4x up to 4.7 GHz | 4x up to 5.0 GHz | 4x up to 4.6 GHz |
| Efficient Cores | 8x up to 3.9 GHz | 8x up to 3.5 GHz | 8x up to 3.7 GHz | 8x up to 3.4 GHz |
| Cores | 14 (20 Threads) | 12 (16 Threads) | 12 (16 Threads) | 12 (16 Threads) |
| TDP (Processor Base Power) | 28 W | 28 W | 28 W | 28 W |
| Memory Type | 2x 260-pin 1.2 V DDR4 3200 MHz SO-DIMM | 2x 260-pin 1.2 V DDR4 3200 MHz SO-DIMM | 2x 260-pin 1.2 V DDR4 3200 MHz SO-DIMM | 2x 260-pin 1.2 V DDR4 3200 MHz SO-DIMM |
| Max Memory | 64 GB | 64 GB | 64 GB | 64 GB |
| Chassis (Length x Width x Height) | All models: 117 x 112 x 54 mm (Slim Kit: 37 mm height) | | | |
| USB Ports | All models: Front: 2x USB 3.2 Gen2; Back: 2x USB 4 (Type-C), 1x USB 3.2, 1x USB 2.0; Internal header: 2x USB 2.0, USB 3.2 on M.2 | | | |
| Thunderbolt Ports | 2x Thunderbolt 4 | 2x Thunderbolt 4 | 2x Thunderbolt 4 | 2x Thunderbolt 4 |
| Storage | All models: M.2 22x80 (key M) for SATA3 or PCIe x4 Gen4 NVMe or AHCI SSD; M.2 22x42 (key B) for SATA3 or PCIe x1 Gen3 NVMe or AHCI SSD; SATA3 2.5" HDD/SSD (H only) | | | |
| LAN | All models: Intel I226 2.5 Gigabit LAN; 2nd Intel 2.5 Gigabit LAN (on expansion module) | | | |
| Intel VT-x | Yes | Yes | Yes | Yes |
| Intel vPro | Yes | Yes | No | No |
| Available | Q1 2023 | Q1 2023 | Q1 2023 | Q1 2023 |

Model Types

The Arena Canyon NUC is available with 5 different CPUs. Each version is available in different configurations. New in the 13th Generation is that selected models (L5) are going to be available for 5 years, instead of the usual 3 years.

The following table explains the various options:

Example: NUC13ANHv7

| Type | Example | Description |
|---|---|---|
| NUC Gen | NUC13 | 13th Generation Intel NUC |
| Family | AN | 2-letter NUC Family Code: AN - Arena Canyon; L3 - 3-year available SKU; L5 - 5-year available SKU |
| Form Factor | H | NUC form factor: B - Board only; K - Slim Kit; H - Tall Kit (System with 2.5" drive bay) |
| CPU | v7 | Processor type: i3 - Core i3; i5 - Core i5; i7 - Core i7; v5 - Core i5 with vPro; v7 - Core i7 with vPro |
| Suffix | E | 0L - 2nd LAN Adapter included; 0Z - "Lite" Model (No Thunderbolt) |
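The naming scheme in the table can be turned into a small decoder. The following is a minimal sketch in POSIX shell, not an official Intel tool: the function name `decode_nuc_model` is made up for illustration, and it assumes the fields always sit at fixed character positions (generation at 4-5, family at 6-7, form factor at 8, CPU at 9-10), as they do in `NUC13ANHv7`.

```shell
#!/bin/sh
# Hypothetical helper: splits a product code such as NUC13ANHv7 into the
# fields from the naming table. Fixed character positions are an assumption.
decode_nuc_model() {
  model=$1
  case "$model" in
    NUC[0-9][0-9][A-Z][A-Z0-9][BKH][iv][357]*) ;;
    *) echo "unrecognized model: $model" >&2; return 1 ;;
  esac

  gen=$(printf '%s' "$model" | cut -c4-5)
  family=$(printf '%s' "$model" | cut -c6-7)
  form=$(printf '%s' "$model" | cut -c8)
  cpu=$(printf '%s' "$model" | cut -c9-10)
  suffix=$(printf '%s' "$model" | cut -c11-)

  # Translate the codes using the descriptions from the table
  case "$family" in
    AN) family="Arena Canyon" ;;
    L3) family="3-year availability SKU" ;;
    L5) family="5-year availability SKU" ;;
  esac
  case "$form" in
    B) form="Board only" ;;
    K) form="Slim Kit" ;;
    H) form="Tall Kit" ;;
  esac
  case "$cpu" in
    i3|i5|i7) cpu="Core $cpu" ;;
    v5) cpu="Core i5 with vPro" ;;
    v7) cpu="Core i7 with vPro" ;;
    *) echo "unrecognized CPU code: $cpu" >&2; return 1 ;;
  esac

  echo "generation: $gen"
  echo "family: $family"
  echo "form factor: $form"
  echo "cpu: $cpu"
  # The suffix is optional, so only print it when present
  [ -n "$suffix" ] && echo "suffix: $suffix"
  return 0
}

decode_nuc_model NUC13ANHv7
```

Unknown family codes are printed unchanged, so the sketch degrades gracefully for NUC families not covered in this article.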

HCL and VMware ESXi Support

Intel NUCs are not supported by VMware and are not listed in the HCL. Not supported means that you can't open Service Requests with VMware when you have a problem. It does not state that it won't work.

ESXi 8.0 - ESXi runs out of the box with the original image.

ESXi 7.0 - The Community Networking Driver for ESXi is required.

Currently, there is no driver available for ESXi 6.x
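The three statements above can be captured in a small helper, for example to decide in a provisioning script whether a custom image is needed. This is only a sketch: the function name `nic_driver_requirement` and its output keywords are made up for illustration, and it encodes nothing beyond the version notes listed here.

```shell
#!/bin/sh
# Hypothetical helper: maps an ESXi version string to the I226 NIC driver
# situation described in this article. Names and keywords are illustrative.
nic_driver_requirement() {
  case "$1" in
    8.*) echo "inbox" ;;        # ESXi 8.0: runs with the original image
    7.*) echo "community" ;;    # ESXi 7.0: Community Networking Driver required
    6.*) echo "unavailable" ;;  # ESXi 6.x: no driver available
    *)   echo "unknown"; return 1 ;;
  esac
}

for v in 8.0.1 7.0.3 6.7.0; do
  echo "ESXi $v: $(nic_driver_requirement "$v")"
done
```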

To clarify, the system is not supported by VMware, so do not use it in a production environment. I cannot guarantee that it will run stably. As a home lab or a small home server, it should be fine.

Network (Intel I226-LM) - "No Network Adapters" Error with ESXi 7.0

The Community Networking Driver for ESXi is required to install ESXi 7.0 on the 13th Gen NUC. See Installation for instructions.

Storage (AHCI and NVMe)

The storage controller works out of the box with ESXi 7.0 and ESXi 8.0.

Tested ESXi Versions

- VMware ESXi 8.0 U1

- VMware ESXi 8.0

- VMware ESXi 7.0 U3 (Custom Image only)

- VMware ESXi 7.0 U2 (Custom Image only)

Installation

When you try to install ESXi 7.0 or 8.0 on a system with a 13th Gen Intel CPU, the installation fails with a purple diagnostics screen:

HW feature incompatibility detected; cannot start

Fatal CPU mismatch on feature "Hyperthreads per core"

Fatal CPU mismatch on feature "Cores per package"

Fatal CPU mismatch on feature "Cores per die"

This problem is caused by the new CPU architecture which includes different types of cores - Performance-cores and Efficient-cores. With vSphere 7.0 Update 2, the kernel parameter cpuUniformityHardCheckPanic has been implemented to address the issue. The option can be configured with the following command:

# esxcli system settings kernel set -s cpuUniformityHardCheckPanic -v FALSE

You also have to enable kernel option ignoreMsrFaults to prevent PSOD during VM startups. (Credit to William Lam for providing a solution for the PSOD Issue)

# esxcli system settings kernel set -s ignoreMsrFaults -v TRUE
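Both kernel settings can be applied and then checked in one go. The following is a minimal sketch for the ESXi shell: the `run` wrapper, the `DRY_RUN` variable, and the function name `apply_hybrid_cpu_settings` are made up for illustration. By default it only prints the commands, so it can be reviewed on any machine; `esxcli system settings kernel list -o <option>` is used to display the resulting values.

```shell
#!/bin/sh
# DRY_RUN=1 (default in this sketch) prints the commands instead of running
# them. Set DRY_RUN=0 on the ESXi host to actually execute them.
DRY_RUN=${DRY_RUN:-1}

run() {
  if [ "$DRY_RUN" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

apply_hybrid_cpu_settings() {
  # Do not panic on non-uniform cores (P-cores and E-cores)
  run esxcli system settings kernel set -s cpuUniformityHardCheckPanic -v FALSE
  # Avoid the PSOD during VM startup
  run esxcli system settings kernel set -s ignoreMsrFaults -v TRUE
  # Show the configured values for review
  run esxcli system settings kernel list -o cpuUniformityHardCheckPanic
  run esxcli system settings kernel list -o ignoreMsrFaults
}

apply_hybrid_cpu_settings
```

The dry-run default is a deliberate choice: kernel settings affect how the host boots, so it seems safer to look at the exact commands before running them.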

Alternatively, to work around the issue, you can also disable the E-cores or P-cores completely. See this article for instructions.

If you want to use ESXi 8.0, just download the Image and create a bootable USB flash drive.

If you try to install ESXi 7.0, the installer fails with a "No Network Adapters" error. A driver for the Intel I226-LM 2.5GbE adapter is not bundled in the standard ESXi Image but is available as a community-based VMware Fling.

Download: Community Networking Driver for ESXi

How to create a Custom ESXi Image

This section explains how to create the custom image with PowerCLI. The image can be used to install ESXi from scratch.

- Download the driver Net-Community-Driver_1.2.7.0-1vmw.700.1.0.15843807_19480755.zip (link)

- Copy the driver to your build directory (for example, c:\esx)

- Open PowerShell

- Run the following commands in your build directory:

# (Optional) Install PowerCLI Module

Install-Module -Name VMware.PowerCLI -Scope CurrentUser

Add-EsxSoftwareDepot https://hostupdate.vmware.com/software/VUM/PRODUCTION/main/vmw-depot-index.xml

Export-ESXImageProfile -ImageProfile "ESXi-7.0.0-15843807-standard" -ExportToBundle -filepath ESXi-7.0.0-15843807-standard.zip

Remove-EsxSoftwareDepot https://hostupdate.vmware.com/software/VUM/PRODUCTION/main/vmw-depot-index.xml

Add-EsxSoftwareDepot .\ESXi-7.0.0-15843807-standard.zip

Add-EsxSoftwareDepot .\Net-Community-Driver_1.2.7.0-1vmw.700.1.0.15843807_19480755.zip

New-EsxImageProfile -CloneProfile "ESXi-7.0.0-15843807-standard" -name "ESXi-7.0.0-15843807-NUC" -Vendor "virten.net"

Add-EsxSoftwarePackage -ImageProfile "ESXi-7.0.0-15843807-NUC" -SoftwarePackage "net-community"

Export-ESXImageProfile -ImageProfile "ESXi-7.0.0-15843807-NUC" -ExportToIso -filepath ESXi-7.0.0-15843807-NUC.iso

- Use the ISO image to install ESXi. The simplest way to install ESXi is by using the ISO and Rufus to create a bootable ESXi Installer USB Flash Drive. No custom BIOS Settings are required.

If you have already installed ESXi (with a USB NIC, for example), you can install the newer driver with the following commands:

# esxcli network firewall ruleset set -e true -r httpClient

# cd /tmp/

# wget https://download3.vmware.com/software/vmw-tools/community-network-driver/Net-Community-Driver_1.2.7.0-1vmw.700.1.0.15843807_19480755.zip

# esxcli software vib install -d Net-Community-Driver_1.2.7.0-1vmw.700.1.0.15843807_19480755.zip

Performance

The performance of a single NUC is sufficient to run a small home lab including a vCenter Server and 3 virtual ESXi hosts. It's a great system to take along for demonstration purposes. If you have multiple systems and a vCenter Server you can build a powerful cluster.

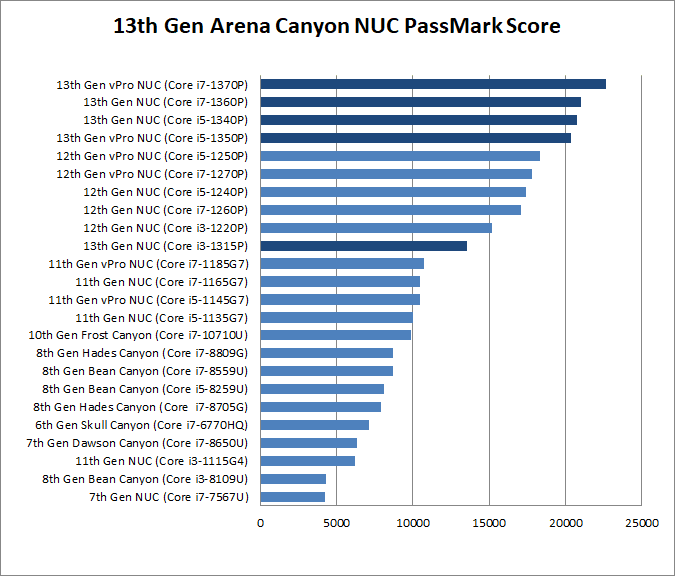

The following chart is a comparison based on the NUC's PassMark score. The performance gap to previous generations is huge, which is a result of going up from 4 cores to 12 or 14 cores (16 or 20 threads).

Rackmount Kit for 13th Gen NUC

I created Rackmount Kits for various NUC Generations to be able to install the system into a 10" rack. You can find a list of all available designs here. The 13th Gen NUC chassis is identical to the 12th Gen, so it is compatible with the 12th Gen Rackmount.

Features

- Modular and reproducible Design

- Easy to print and sturdy

- NUC is attached to the Rackmount using VESA mounts or Velcro Straps

- Front Access for Ports using Keystone Adapters

- 1.5U design that allows mounting 2 NUCs in 3U

Power consumption

NUCs have a very low power consumption. I'm measuring power consumption in different scenarios: Idle (ESXi in Maintenance Mode), Average Load (1 vCenter, 4 Linux VMs), and during a Stress test. The NUC has been configured with 64GB RAM and no HDD or SSD. The power policy was configured to be "Balanced".

- Idle: 10 W

- Average Load: 26 W

- Stress Test: 63 W

The average operating costs are less than 5 Euros per month, assuming an average draw of 20 W and a price of 0.30 EUR per kWh:

20 W * 24 h * 30 days = 14.4 kWh

14.4 kWh * 0.30 EUR/kWh = 4.32 EUR
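The calculation above is easy to redo for other loads or tariffs. A minimal sketch, assuming `awk` is available: the function name `monthly_cost` is made up for illustration, and the 0.30 EUR/kWh price and the 30-day month are taken from the calculation above.

```shell
#!/bin/sh
# monthly_cost WATTS PRICE_PER_KWH [DAYS]
# Prints the cost of running a constant load for DAYS days (default: 30).
monthly_cost() {
  LC_ALL=C awk -v w="$1" -v p="$2" -v d="${3:-30}" \
    'BEGIN { printf "%.2f\n", w * 24 * d / 1000 * p }'
}

# The three measured scenarios: idle, average load, stress test
for watts in 10 26 63; do
  echo "$watts W: $(monthly_cost "$watts" 0.30) EUR per month"
done
```

Calling `monthly_cost 20 0.30` reproduces the 4.32 EUR from the calculation above.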

Consumption measured with Homematic HM-ES-PMSw1

Wow nice info :-)

Would it be possible to use it in a 2-node vSAN (OSA) cluster setup?

Running ESA will become difficult I guess, with the 4 disk requirement? :-)

Yes, just like vSAN 1.0 (OSA), this is possible and supported. This can even work w/1-Node https://williamlam.com/2023/01/how-to-bootstrap-vsan-express-storage-architecture-esa-on-unsupported-hardware.html

For all sorts of homelab/NUC tricks, be sure to check out https://williamlam.com/home-lab

Thanks for the info, very comprehensive.

I would have a question though. I am currently running ESXi on a gen8 NUC, and WOL is not working after the machine has been shut down from ESXi.

Do you know if that issue is present in this hardware version?

thanks a lot!

Great article!! I have a question can all the cores (p and e and hyper threads) be utilised by vms with the two modifications listed? If so what cores are allocated to a vm? Will it get some from both pools? Or do I have to disable e or p cores in the bios to use vms?