ESXi on 13th Gen Intel NUC Pro (Arena Canyon)

Intel's Raptor Lake-based 13th Gen "Arena Canyon" NUC Professional series is now available for purchase. This article takes a closer look at its capabilities for running a virtualization lab based on the VMware ESXi hypervisor. While VMware does not officially support NUCs, they are very common in home labs and test environments. They are small, silent, portable, and have very low power consumption, making them a great server for a cost-conscious home lab. While there are many NUC-like clones on the market today, the original Intel NUC remains very popular due to its durability. My first "Pro" NUC, the NUC5i5MYHE, is now 8 years old and still runs without problems.

The 13th Gen Arena Canyon is available with i3, i5, and i7 CPUs. The i5 and i7 versions are also available with vPro support.

- NUC13ANHv7 / NUC13ANKv7 (Intel Core i7-1370P vPro - 6 P-cores up to 5.20 GHz / 8 E-cores up to 3.90 GHz)

- NUC13ANHv5 / NUC13ANKv5 (Intel Core i5-1350P vPro - 4 P-cores up to 4.70 GHz / 8 E-cores up to 3.50 GHz)

- NUC13ANHi7 / NUC13ANKi7 (Intel Core i7-1360P - 4 P-cores up to 5.00 GHz / 8 E-cores up to 3.70 GHz)

- NUC13ANHi5 / NUC13ANKi5 (Intel Core i5-1340P - 4 P-cores up to 4.60 GHz / 8 E-cores up to 3.40 GHz)

- NUC13ANHi3 / NUC13ANKi3 (Intel Core i3-1315U - 2 P-cores up to 4.50 GHz / 4 E-cores up to 3.30 GHz)
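To compare the models at a glance, the core counts in the list can be summed up with a few lines of Python. This is only a rough sketch: it reads the two figures per model as performance-core and efficiency-core counts, and it assumes Hyper-Threading on the performance cores only, which is how Intel's hybrid designs are generally specified. The `MODELS` table and the `core_summary` helper are hypothetical names made up for this illustration.

```python
# (performance cores, efficiency cores) per model, copied from the list above.
MODELS = {
    "NUC13ANHv7 / NUC13ANKv7": (6, 8),
    "NUC13ANHv5 / NUC13ANKv5": (4, 8),
    "NUC13ANHi7 / NUC13ANKi7": (4, 8),
    "NUC13ANHi5 / NUC13ANKi5": (4, 8),
    "NUC13ANHi3 / NUC13ANKi3": (2, 4),
}


def core_summary(p_cores, e_cores):
    """Return (total physical cores, total hardware threads).

    Assumption: each performance core exposes two threads
    (Hyper-Threading) and each efficiency core exposes one.
    """
    total_cores = p_cores + e_cores
    total_threads = 2 * p_cores + e_cores
    return total_cores, total_threads


if __name__ == "__main__":
    for model, (p, e) in MODELS.items():
        cores, threads = core_summary(p, e)
        print(f"{model}: {cores} cores, {threads} threads")
```

Keep in mind that these are the raw hardware totals. What ESXi actually exposes depends on BIOS settings and on how the hypervisor handles the mixed core types.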

The Arena Canyon is Intel's professional line in the 13th NUC generation and the successor to the 12th Gen Wall Street Canyon. The system is intended for professional use cases and brings several features that are useful for a home lab running ESXi. Like its predecessors since the 11th generation, it has an expansion bay that allows you to install a second network adapter.

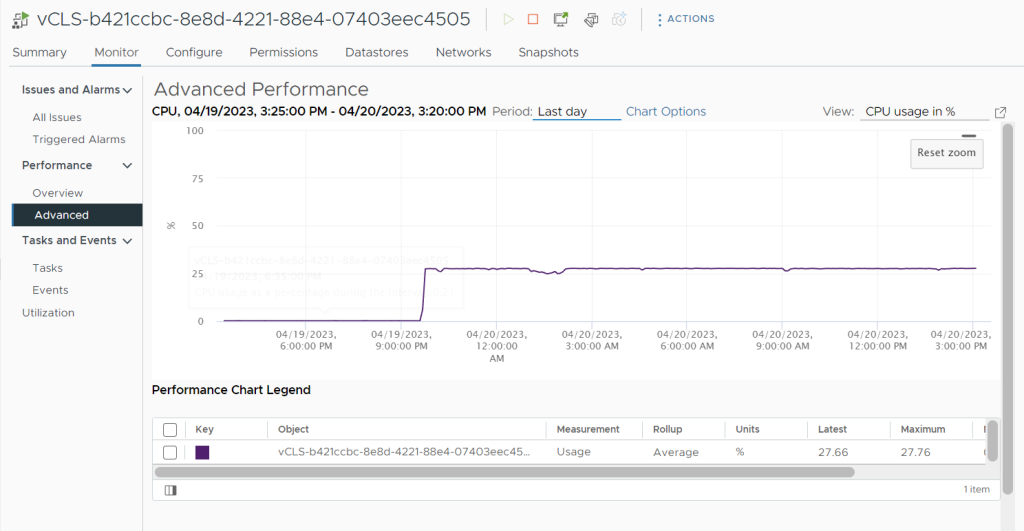

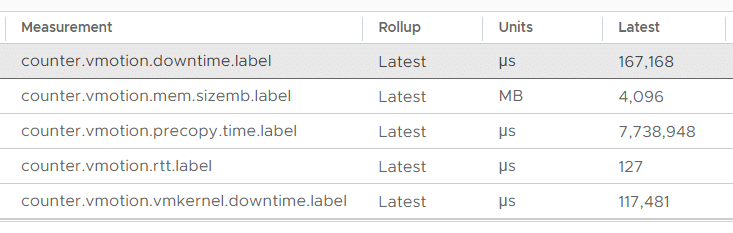

