VMware ESXi 7.0 Update 3 on Intel NUC

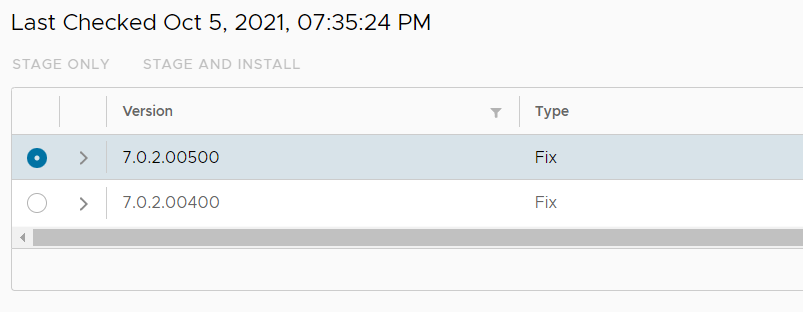

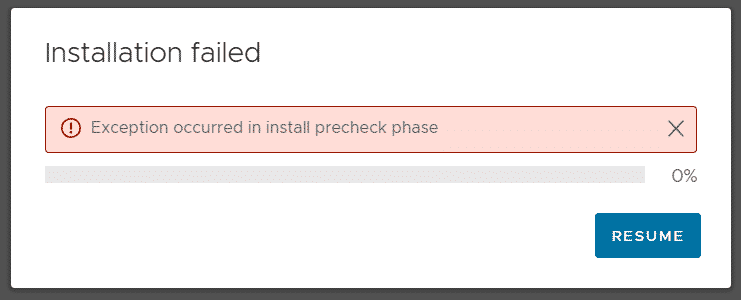

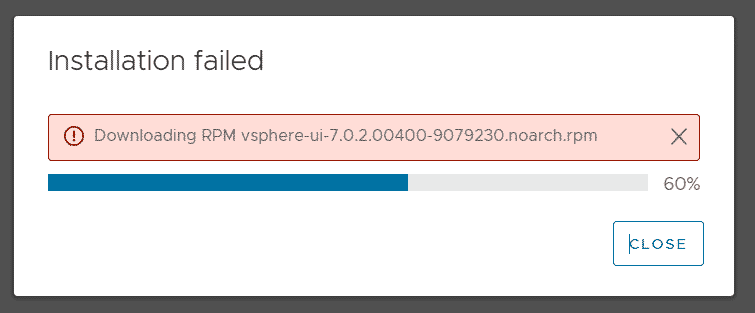

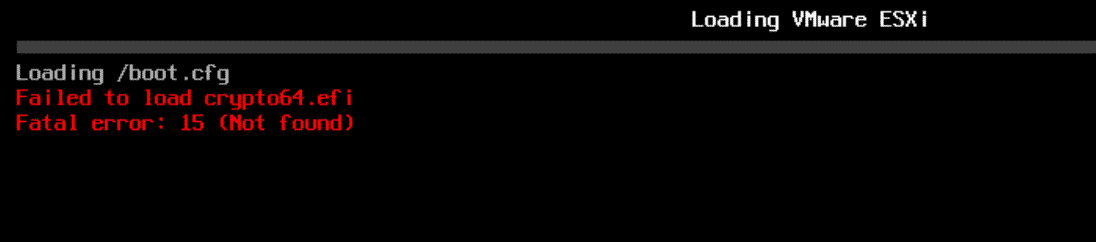

VMware vSphere ESXi 7.0 Update 3 was released in October, and before you deploy it to production, you will want to evaluate it in your testing environment or homelab. If you have Intel NUCs or similar hardware, you should be very careful when updating to new ESXi releases, as there might be issues. Always keep in mind that this is not an officially supported platform, so compatibility problems are possible.

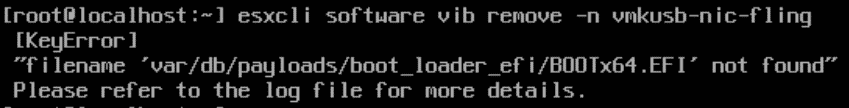

In vSphere 7.0, there are ups and downs with consumer-grade network adapters. Since the deprecation of VMKlinux drivers, there is no option to use Realtek-based NICs, and previous versions had problems with the ne1000 driver. Luckily, the Community Networking Driver for ESXi Fling adds support for a number of network cards, and VMKUSB-NIC-FLING always has your back.

I've updated my NUC portfolio to check which NUCs are safe to update and which considerations you have to take into account before installing the update. Additionally, I'm taking a look at the consequences of the recently deprecated USB/SD card usage for ESXi installations and some general issues in 7.0u3.

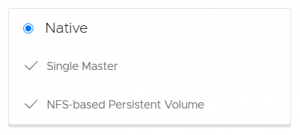

With the release of Cloud Director 10.2, the Container Service Extension 3.0 has been released. With CSE 3.0 you can extend your cloud offering by providing Kubernetes as a Service. Customers can create and manage their own K8s clusters directly in the VMware Cloud Director portal.

When you are running an ESXi-based homelab, you might have considered using vSAN as the storage technology of choice. Hyperconverged storage is a growing alternative to SAN-based systems in virtual environments, so using it at home will help you build your skills with that technology.

This is a list of all performance metrics available in vCenter Server 7.0. Performance counters can be viewed for virtual machines, hosts, clusters, resource pools, and other objects by opening Monitor > Performance in the vSphere Client.
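Performance counters are commonly referred to by a dotted name built from their group, counter name, and rollup type, for example `cpu.usage.average`. The following is a minimal sketch of that naming scheme. The `Counter` class and `index_by_name` helper are hypothetical stand-ins written for this illustration, not part of any VMware SDK, and the numeric IDs in `SAMPLE` are made up, since real IDs should be read from the vCenter you are connected to.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Counter:
    """Hypothetical stand-in for one performance counter definition."""
    key: int      # numeric counter ID (illustrative values only)
    group: str    # counter group, e.g. "cpu"
    name: str     # counter name within the group, e.g. "usage"
    rollup: str   # rollup type, e.g. "average"
    unit: str     # unit of measurement

    @property
    def full_name(self) -> str:
        # Dotted form: <group>.<name>.<rollup>
        return f"{self.group}.{self.name}.{self.rollup}"


def index_by_name(counters):
    """Build a lookup table from dotted counter name to counter."""
    return {c.full_name: c for c in counters}


# Illustrative sample data; the IDs are assumptions, not real vCenter values.
SAMPLE = [
    Counter(1, "cpu", "usage", "average", "percent"),
    Counter(2, "mem", "consumed", "average", "kiloBytes"),
    Counter(3, "net", "usage", "average", "kiloBytesPerSecond"),
]

if __name__ == "__main__":
    for full_name, counter in sorted(index_by_name(SAMPLE).items()):
        print(f"{full_name:<24} id={counter.key} unit={counter.unit}")
```

Indexing by the dotted name rather than by numeric ID keeps lookups readable and avoids hard-coding IDs that may differ between environments.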
