Direct Org Network to TKC Network Communication in Cloud Director 10.2

Ever since VMware introduced vSphere with Tanzu support in VMware Cloud Director 10.2, I have been struggling to find a proper way to give customers bidirectional communication between Virtual Machines and Pods. In earlier Kubernetes implementations using Container Service Extension (CSE) "Native Cluster", workers and the control plane were placed directly in Organization networks. Communication between Pods and Virtual Machines was quite easy, even if they were placed in different subnets, because traffic could be routed through the Tier1 Gateway.

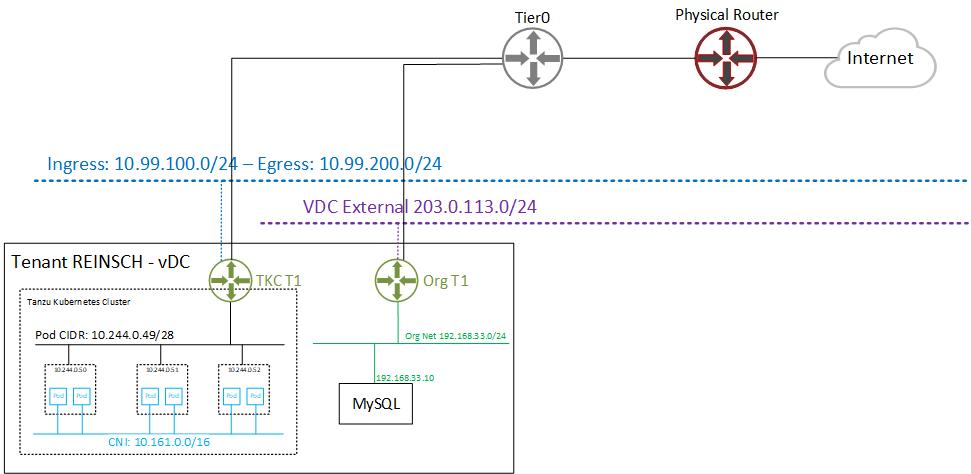

With Tanzu meeting VMware Cloud Director, Kubernetes Clusters have their own Tier1 Gateway. While it would be technically possible to implement routing between the Tanzu and VCD Tier1s through the Tier0, the typical Cloud Director Org Network is hidden behind a NAT. There is simply no way to prevent overlapping networks when the Tier1 Gateways advertise their networks to the upstream Tier0. The following diagram shows the VCD networking with Tanzu enabled.

With Cloud Director 10.2.2, VMware further optimized the implementation by automatically setting up Firewall Rules on the TKC Tier1 that only allow the tenant's Org Networks to access Kubernetes services. They also published a guide on how customers can NAT their public IP addresses to TKC Ingress addresses to make them accessible from the Internet. The method is described here (see Publish Kubernetes Services using VCD Org Networks). Unfortunately, communication from Pods to Virtual Machines in VCD still does not seem to be in VMware's scope.

While developing a decent solution based on Kubernetes Endpoints, I came up with a questionable workaround. I highly doubt that these methods are supported or useful in production, but I still want to share them to show what could actually be possible.
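For readers unfamiliar with the Endpoints approach, here is a minimal sketch of the general Kubernetes pattern: a Service without a selector, paired with a manually maintained Endpoints object of the same name that points at an IP address outside the cluster. It is not necessarily the exact configuration used in the full article. The name `legacy-db`, the address `192.168.10.20`, and the port are hypothetical placeholders, and the pattern only gives Pods a stable in-cluster name for the VM; the VM's address still has to be reachable from the Pod network.

```python
import json


def external_vm_service(name: str, vm_ip: str, port: int):
    """Build a selector-less Service and a matching Endpoints object.

    Kubernetes links the two objects by name, so both must share `name`.
    """
    service = {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {"name": name},
        # No "selector": Kubernetes will not manage the Endpoints for us.
        "spec": {"ports": [{"protocol": "TCP", "port": port, "targetPort": port}]},
    }
    endpoints = {
        "apiVersion": "v1",
        "kind": "Endpoints",
        "metadata": {"name": name},
        # The address is the Virtual Machine's IP in the Org Network (placeholder).
        "subsets": [{"addresses": [{"ip": vm_ip}], "ports": [{"port": port}]}],
    }
    return service, endpoints


if __name__ == "__main__":
    # Hypothetical values, for illustration only.
    svc, eps = external_vm_service("legacy-db", "192.168.10.20", 5432)
    manifest = {"apiVersion": "v1", "kind": "List", "items": [svc, eps]}
    print(json.dumps(manifest, indent=2))
```

The printed JSON can be saved to a file and applied with `kubectl apply -f`, since kubectl accepts JSON manifests as well as YAML.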


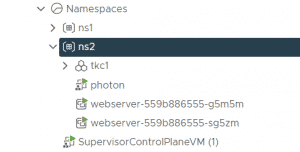

This article explains how you can create Virtual Machines in Kubernetes Namespaces in vSphere with Tanzu. The deployment of Virtual Machines in Kubernetes namespaces using kubectl was shown in demonstrations but is currently (as of vSphere 7.0 U2) not supported. Only with third-party integrations like TKG is it possible to create Virtual Machines by leveraging the vmoperator.


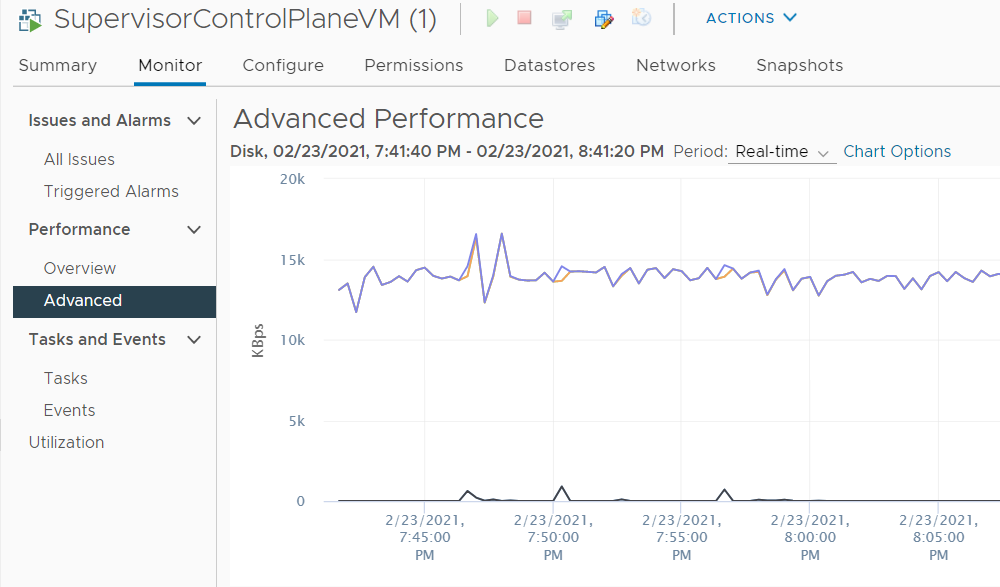

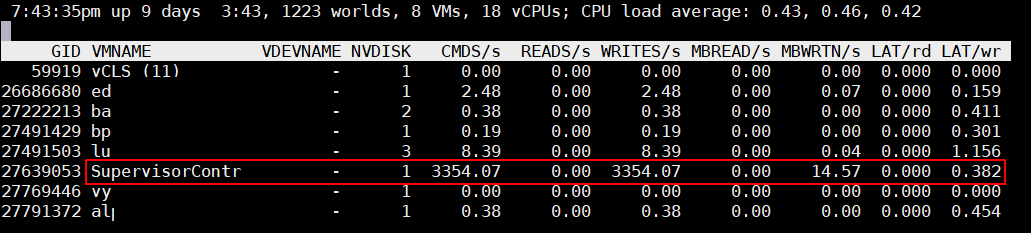

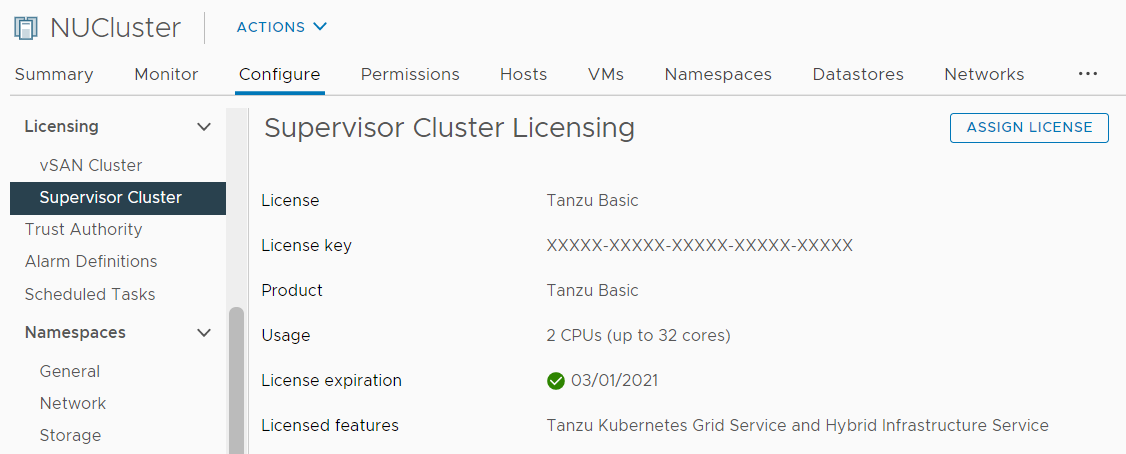

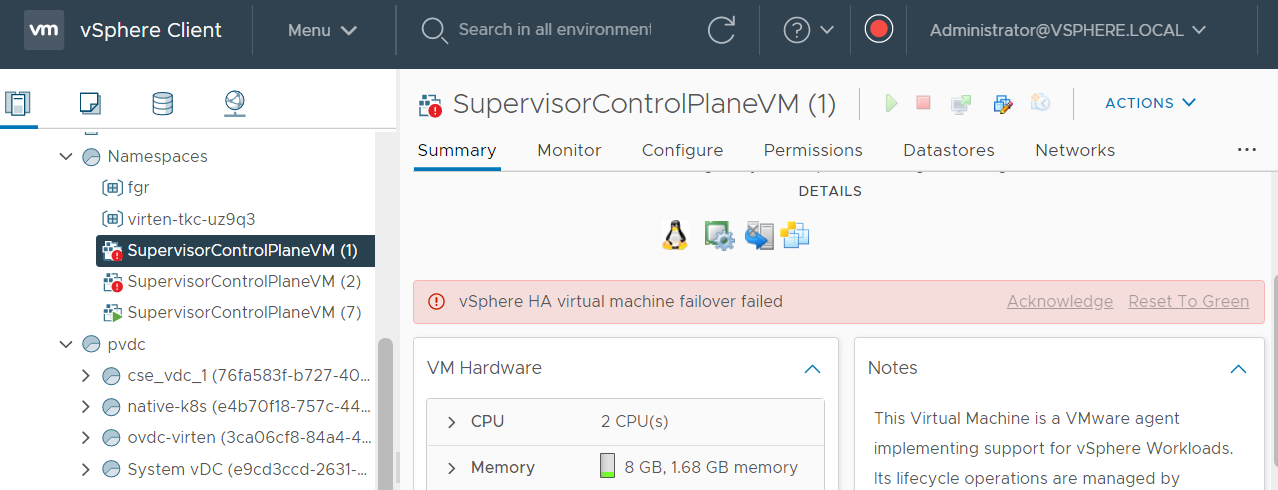

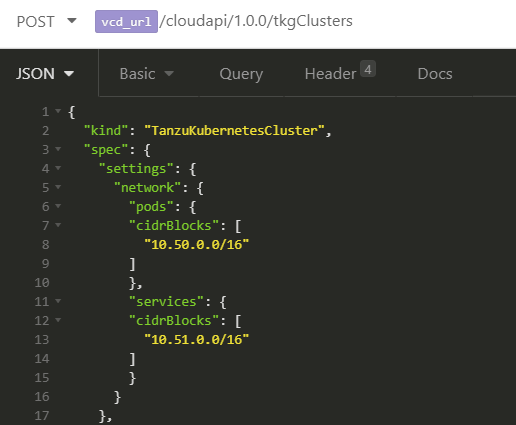

A major problem when deploying "vSphere with Tanzu" Clusters in VMware Cloud Director 10.2 is that the defaults for TKG Clusters overlap with the defaults for the Supervisor Cluster configured in vCenter Server during the Workload Management enablement.
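An overlap like this can be checked before deployment with Python's standard `ipaddress` module. The sketch below compares every Supervisor Cluster range against every TKG Cluster range. The CIDR values are illustrative placeholders, not confirmed product defaults; substitute the ranges from your own Workload Management configuration and cluster settings.

```python
import ipaddress
from itertools import product


def find_overlaps(supervisor: dict, tkc: dict) -> list:
    """Return (supervisor_key, tkc_key) pairs whose CIDR ranges overlap."""
    overlaps = []
    for (s_name, s_cidr), (t_name, t_cidr) in product(supervisor.items(), tkc.items()):
        s_net = ipaddress.ip_network(s_cidr)
        t_net = ipaddress.ip_network(t_cidr)
        if s_net.overlaps(t_net):
            overlaps.append((s_name, t_name))
    return overlaps


if __name__ == "__main__":
    # Placeholder ranges for illustration only; replace with your own values.
    supervisor_ranges = {"pods": "10.244.0.0/20", "services": "10.96.0.0/23"}
    tkc_ranges = {"pods": "192.168.0.0/16", "services": "10.96.0.0/12"}

    for s_name, t_name in find_overlaps(supervisor_ranges, tkc_ranges):
        print(f"Supervisor {s_name} range overlaps with TKC {t_name} range")
```

Running a check like this against the planned ranges makes it easier to pick non-overlapping Pod and Service CIDRs before the cluster is created.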
