Part 3 of the "Manage VSAN with RVC" series explains commands related to VSAN objects. These commands provide insight into how VSAN works. You can use them to identify the status of objects and where they are located:

- vsan.disks_info

- vsan.disks_stats

- vsan.cmmds_find

- vsan.vm_object_info

- vsan.disk_object_info

- vsan.object_info

- vsan.object_reconfigure

To make the commands easier to read, I created marks for a cluster, a virtual machine and an ESXi host. This allows me to use ~cluster, ~vm and ~esx in my examples:

/localhost/DC> mark cluster ~/computers/VSAN-Cluster/

/localhost/DC> mark vm ~/vms/vma.virten.lab

/localhost/DC> mark esx ~/computers/VSAN-Cluster/hosts/esx1.virten.lab/

Object Management

vsan.disks_info

Prints information about all physical disks of a host. This command helps to identify backend disks and their state. It also provides hints on why physical disks are ineligible for VSAN. The command returns information about:

- Disk Display Name

- Disk type (SSD or MD)

- RAW Size

- State (inUse, eligible or ineligible)

- Existing partitions

Example 1 - Print host disk information

/localhost/DC> vsan.disks_info ~esx

Disks on host esx1.virten.lab:

+-------------------------------------+-------+--------+----------------------------------------+

| DisplayName                         | isSSD | Size   | State                                  |

+-------------------------------------+-------+--------+----------------------------------------+

| WDC_WD3000HLFS                      | MD    | 279 GB | inUse                                  |

| ATA WDC WD3000HLFS-0                |       |        |                                        |

+-------------------------------------+-------+--------+----------------------------------------+

| WDC_WD3000HLFS                      | MD    | 279 GB | inUse                                  |

| ATA WDC WD3000HLFS-0                |       |        |                                        |

+-------------------------------------+-------+--------+----------------------------------------+

| WDC_WD3000HLFS                      | MD    | 279 GB | inUse                                  |

| ATA WDC WD3000HLFS-0                |       |        |                                        |

+-------------------------------------+-------+--------+----------------------------------------+

| SanDisk_SDSSDP064G                  | SSD   | 59 GB  | inUse                                  |

| ATA SanDisk SDSSDP06                |       |        |                                        |

+-------------------------------------+-------+--------+----------------------------------------+

| Local USB (mpx.vmhba32:C0:T0:L0)    | MD    | 7 GB   | ineligible (Existing partitions found  |

| USB DISK 2.0                        |       |        | on disk 'mpx.vmhba32:C0:T0:L0'.)       |

|                                     |       |        |                                        |

|                                     |       |        | Partition table:                       |

|                                     |       |        | 5: 0.24 GB, type = vfat                |

|                                     |       |        | 6: 0.24 GB, type = vfat                |

|                                     |       |        | 7: 0.11 GB, type = coredump            |

|                                     |       |        | 8: 0.28 GB, type = vfat                |

+-------------------------------------+-------+--------+----------------------------------------+

vsan.disks_stats

Prints statistics for all disks in VSAN. The command can be used against a host or the whole cluster. When used against a host, it only resolves names from the given host. I suggest running this command against clusters only, so that names are displayed for all disks and hosts in the VSAN. It is very helpful when you are troubleshooting disk full errors. The command returns information about:

- Disk Display Name

- Disk Size

- Disk type (SSD or MD)

- Number of Components

- Capacity

- Used/Reserved percentage

- Health Status

The command has 2 options: --compute-number-of-components (-c) and --show-iops (-s). Both options are deprecated. The number of components is always displayed.

Example 1 - Print VSAN Disk Stats:

/localhost/DC> vsan.disks_stats ~cluster

+---------------------------------+-----------------+-------+------+-----------+------+----------+--------+

|                                 |                 |       | Num  | Capacity  |      |          | Status |

| DisplayName                     | Host            | isSSD | Comp | Total     | Used | Reserved | Health |

+---------------------------------+-----------------+-------+------+-----------+------+----------+--------+

| t10.ATA_____SanDisk_SDSSDP064G  | esx1.virten.lab | SSD   | 0    | 41.74 GB  | 0 %  | 0 %      | OK     |

| t10.ATA_____WDC_WD3000HLFS      | esx1.virten.lab | MD    | 30   | 279.25 GB | 43 % | 42 %     | OK     |

| t10.ATA_____WDC_WD3000HLFS      | esx1.virten.lab | MD    | 32   | 279.25 GB | 41 % | 40 %     | OK     |

| t10.ATA_____WDC_WD3000HLFS      | esx1.virten.lab | MD    | 24   | 279.25 GB | 33 % | 32 %     | OK     |

+---------------------------------+-----------------+-------+------+-----------+------+----------+--------+

| t10.ATA_____SanDisk_SDSSDP064G  | esx2.virten.lab | SSD   | 0    | 41.74 GB  | 0 %  | 0 %      | OK     |

| t10.ATA_____WDC_WD3000HLFS      | esx2.virten.lab | MD    | 32   | 279.25 GB | 44 % | 44 %     | OK     |

| t10.ATA_____WDC_WD3000HLFS      | esx2.virten.lab | MD    | 34   | 279.25 GB | 46 % | 44 %     | OK     |

| t10.ATA_____WDC_WD3000HLFS      | esx2.virten.lab | MD    | 28   | 279.25 GB | 33 % | 32 %     | OK     |

+---------------------------------+-----------------+-------+------+-----------+------+----------+--------+

| t10.ATA_____SanDisk_SDSSDP064G  | esx3.virten.lab | SSD   | 0    | 41.74 GB  | 0 %  | 0 %      | OK     |

| t10.ATA_____WDC_WD3000HLFS      | esx3.virten.lab | MD    | 35   | 279.25 GB | 42 % | 42 %     | OK     |

| t10.ATA_____WDC_WD3000HLFS      | esx3.virten.lab | MD    | 33   | 279.25 GB | 43 % | 42 %     | OK     |

| t10.ATA_____WDC_WD3000HLFS      | esx3.virten.lab | MD    | 22   | 279.25 GB | 36 % | 34 %     | OK     |

+---------------------------------+-----------------+-------+------+-----------+------+----------+--------+

| t10.ATA_____SanDisk_SDSSDP064G  | esx4.virten.lab | SSD   | 0    | 41.74 GB  | 0 %  | 0 %      | OK     |

| t10.ATA_____WDC_WD3000HLFS      | esx4.virten.lab | MD    | 34   | 279.25 GB | 44 % | 43 %     | OK     |

| t10.ATA_____WDC_WD3000HLFS      | esx4.virten.lab | MD    | 33   | 279.25 GB | 45 % | 42 %     | OK     |

| t10.ATA_____WDC_WD3000HLFS      | esx4.virten.lab | MD    | 21   | 279.25 GB | 36 % | 32 %     | OK     |

+---------------------------------+-----------------+-------+------+-----------+------+----------+--------+
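Since vsan.disks_stats is mostly useful when chasing disk full errors, it can help to filter its output by the Used column. The following is a minimal Python sketch, assuming the table has been copied out of RVC as text. The function name parse_disks_stats and the 40 % threshold are made up for illustration and are not part of RVC.

```python
# Sketch: flag VSAN disks whose "Used" percentage reaches a threshold.
# Input is text copied from vsan.disks_stats; the threshold is arbitrary.

SAMPLE = """\
+---------------------------------+-----------------+-------+------+-----------+------+----------+--------+
| t10.ATA_____SanDisk_SDSSDP064G | esx1.virten.lab | SSD | 0 | 41.74 GB | 0 % | 0 % | OK |
| t10.ATA_____WDC_WD3000HLFS | esx1.virten.lab | MD | 30 | 279.25 GB | 43 % | 42 % | OK |
| t10.ATA_____WDC_WD3000HLFS | esx1.virten.lab | MD | 32 | 279.25 GB | 41 % | 40 % | OK |
| t10.ATA_____WDC_WD3000HLFS | esx1.virten.lab | MD | 24 | 279.25 GB | 33 % | 32 % | OK |
"""

def parse_disks_stats(text):
    """Return one dict per data row; border and header rows are skipped."""
    rows = []
    for line in text.splitlines():
        cells = [c.strip() for c in line.strip().strip("|").split("|")]
        # Data rows have 8 cells and a numeric component count.
        if len(cells) != 8 or not cells[3].isdigit():
            continue
        rows.append({
            "disk": cells[0],
            "host": cells[1],
            "type": cells[2],
            "components": int(cells[3]),
            "total": cells[4],
            "used_pct": int(cells[5].rstrip(" %")),
            "reserved_pct": int(cells[6].rstrip(" %")),
            "health": cells[7],
        })
    return rows

THRESHOLD = 40  # percent, chosen only for this example

rows = parse_disks_stats(SAMPLE)
full = [r for r in rows if r["used_pct"] >= THRESHOLD]
for r in full:
    print(f'{r["host"]} {r["disk"]}: {r["used_pct"]} % used, {r["components"]} components')
```

Parsing by the "|" separator keeps the sketch independent of column widths, so it works on both padded and whitespace-collapsed copies of the table.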

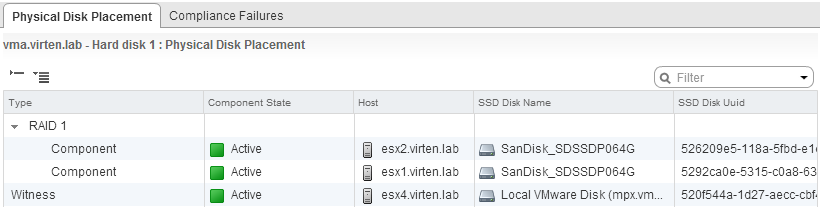

vsan.vm_object_info

Prints VSAN object information about a VM. This command is the equivalent of the Manage > VM Storage Policies tab in the vSphere Web Client and allows you to identify where the stripes, mirrors and witnesses of virtual disks are located. The command returns information about:

- Namespace directory (Virtual Machine home directory)

- Disk backing (Virtual Disks)

- Number of objects (DOM Objects)

- UUIDs of objects and components (useful for other commands)

- Location of object stripes and mirrors

- Location of object witness

- Storage Policy (hostFailuresToTolerate, forceProvisioning, stripeWidth, etc.)

- Resync Status

Example 1 - Print VM object Information:

/localhost/DC> vsan.vm_object_info ~vm

VM vma.virten.lab:

Namespace directory

DOM Object: f3bfa952-175f-4d7e-1eae-001b2193b3b0 (owner: esx2.virten.lab, policy: hostFailuresToTolerate = 1, stripeWidth = 1, spbmProfileId = fbd74e7a-2bf9-481d-88b6-22c0abbc8898, proportionalCapacity = [0, 100], spbmProfileGenerationNumber = 1)

Witness: eb16af52-dd3f-b6c4-19f6-001b2193b3b0 (state: ACTIVE (5), host: esx1.virten.lab, md: t10.ATA_____WD3000HLFS, ssd: t10.ATA_____SanDisk_SDSSDP064G)

Witness: eb16af52-1865-b3c4-3abd-001b2193b3b0 (state: ACTIVE (5), host: esx2.virten.lab, md: t10.ATA_____WD3000HLFS, ssd: t10.ATA_____SanDisk_SDSSDP064G)

Witness: eb16af52-805a-b5c4-cf5d-001b2193b3b0 (state: ACTIVE (5), host: esx1.virten.lab, md: t10.ATA_____WD3000HLFS, ssd: t10.ATA_____SanDisk_SDSSDP064G)

RAID_1

RAID_0

Component: 2c16af52-89fa-db48-09f8-001b2193b3b0 (state: ACTIVE (5), host: esx4.virten.lab, md: t10.ATA_____WD3000HLFS, ssd: t10.ATA_____SanDisk_SDSSDP064G)

Component: 2c16af52-6f1f-da48-5c92-001b2193b3b0 (state: ACTIVE (5), host: esx4.virten.lab, md: t10.ATA_____WD3000HLFS, ssd: t10.ATA_____SanDisk_SDSSDP064G)

RAID_0

Component: f3bfa952-5d7b-f4ba-190f-001b2193b3b0 (state: ACTIVE (5), host: esx2.virten.lab, md: t10.ATA_____WD3000HLFS, ssd: t10.ATA_____SanDisk_SDSSDP064G)

Component: f3bfa952-958b-f3ba-5648-001b2193b3b0 (state: ACTIVE (5), host: esx1.virten.lab, md: t10.ATA_____WD3000HLFS, ssd: t10.ATA_____SanDisk_SDSSDP064G)

Disk backing: [vsanDatastore] f3bfa952-175f-4d7e-1eae-001b2193b3b0/vma.virten.lab.vmdk

DOM Object: fabfa952-721d-fc1f-82d3-001b2193b3b0 (owner: esx2.virten.lab, policy: spbmProfileGenerationNumber = 1, hostFailuresToTolerate = 1, spbmProfileId = fbd74e7a-2bf9-481d-88b6-22c0abbc8898)

Witness: e916af52-075e-9740-f1c7-001b2193b3b0 (state: ACTIVE (5), host: esx4.virten.lab, md: t10.ATA_____WD3000HLFS, ssd: t10.ATA_____SanDisk_SDSSDP064G)

RAID_1

Component: f015af52-7c95-0d57-d7b3-001b2193b3b0 (state: ACTIVE (5), host: esx1.virten.lab, md: t10.ATA_____WD3000HLFS, ssd: t10.ATA_____SanDisk_SDSSDP064G, dataToSync: 0.00 GB)

Component: fabfa952-aa57-d02c-9204-001b2193b3b0 (state: ACTIVE (5), host: esx2.virten.lab, md: t10.ATA_____WD3000HLFS, ssd: t10.ATA_____SanDisk_SDSSDP064G)

What we see here is a virtual machine with one virtual disk. It consists of 2 DOM Objects: the Namespace directory and the virtual disk.
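To get a quick overview of where the witnesses and components of an object live, the Witness and Component lines can be picked apart with a regular expression. This is only a sketch in Python under the assumption that the lines look exactly like the ones in the example above. The names LINE and placement are hypothetical helpers, not RVC functions.

```python
import re
from collections import Counter

# Lines taken from the "Disk backing" object in the example output.
SAMPLE = """\
Witness: e916af52-075e-9740-f1c7-001b2193b3b0 (state: ACTIVE (5), host: esx4.virten.lab, md: t10.ATA_____WD3000HLFS, ssd: t10.ATA_____SanDisk_SDSSDP064G)
RAID_1
Component: f015af52-7c95-0d57-d7b3-001b2193b3b0 (state: ACTIVE (5), host: esx1.virten.lab, md: t10.ATA_____WD3000HLFS, ssd: t10.ATA_____SanDisk_SDSSDP064G, dataToSync: 0.00 GB)
Component: fabfa952-aa57-d02c-9204-001b2193b3b0 (state: ACTIVE (5), host: esx2.virten.lab, md: t10.ATA_____WD3000HLFS, ssd: t10.ATA_____SanDisk_SDSSDP064G)
"""

# Assumed line layout: "<kind>: <uuid> (state: <name> (<n>), host: ..., md: ..., ssd: ...".
LINE = re.compile(
    r"^\s*(?P<kind>Witness|Component): (?P<uuid>\S+) "
    r"\(state: (?P<state>\w+) \(\d+\), host: (?P<host>[^,]+), "
    r"md: (?P<md>[^,]+), ssd: (?P<ssd>[^,)]+)",
    re.MULTILINE,
)

def placement(text):
    """Return a dict for every Witness or Component line found in text."""
    return [m.groupdict() for m in LINE.finditer(text)]

parts = placement(SAMPLE)
per_host = Counter(p["host"] for p in parts)
not_active = [p["uuid"] for p in parts if p["state"] != "ACTIVE"]

for host, count in sorted(per_host.items()):
    print(f"{host}: {count} component(s)")
print("not ACTIVE:", not_active)
```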

vsan.cmmds_find

A powerful command to find objects and detailed object information. It can be used against hosts or clusters. Running it against clusters is recommended, because that resolves UUIDs into readable names.

Usage from help page:

/localhost/DC> help vsan.cmmds_find

usage: cmmds_find [opts] cluster_or_host

CMMDS Find

cluster_or_host: Path to a ClusterComputeResource or HostSystem

--type, -t: CMMDS type, e.g. DOM_OBJECT, LSOM_OBJECT, POLICY, DISK etc.

--uuid, -u: UUID of the entry.

--owner, -o: UUID of the owning node.

--help, -h: Show this message

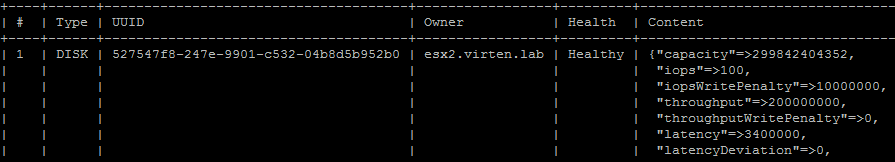

Example 1 - List all Disks in VSAN:

/localhost/DC> vsan.cmmds_find ~cluster -t DISK

+----+------+--------------------------------------+-----------------+---------+-------------------------------------------------------+

| # | Type | UUID | Owner | Health | Content |

+----+------+--------------------------------------+-----------------+---------+-------------------------------------------------------+

| 1 | DISK | 527547f8-247e-9901-c532-04b8d5b952b0 | esx2.virten.lab | Healthy | {"capacity"=>299842404352, |

| | | | | | "iops"=>100, |

| | | | | | "iopsWritePenalty"=>10000000, |

| | | | | | "throughput"=>200000000, |

| | | | | | "throughputWritePenalty"=>0, |

| | | | | | "latency"=>3400000, |

| | | | | | "latencyDeviation"=>0, |

| | | | | | "reliabilityBase"=>10, |

| | | | | | "reliabilityExponent"=>15, |

| | | | | | "mtbf"=>1600000, |

| | | | | | "l2CacheCapacity"=>0, |

| | | | | | "l1CacheCapacity"=>16777216, |

| | | | | | "isSsd"=>0, |

| | | | | | "ssdUuid"=>"526209e5-118a-5fbd-e1d0-0bdd816b32eb", |

| | | | | | "volumeName"=>"52a9bf13-6b13325f-e4da-001b2193b3b0"} |

| 2 | DISK | 526e0510-6461-3410-5be0-0d9ec1b1495f | esx4.virten.lab | Healthy | {"capacity"=>53418655744, |

| | | | | | "iops"=>100, |

| | | | | | "iopsWritePenalty"=>10000000, |

| | | | | | "throughput"=>200000000, |

| | | | | | "throughputWritePenalty"=>0, |

| | | | | | "latency"=>3400000, |

| | | | | | "latencyDeviation"=>0, |

| | | | | | "reliabilityBase"=>10, |

| | | | | | "reliabilityExponent"=>15, |

| | | | | | "mtbf"=>1600000, |

| | | | | | "l2CacheCapacity"=>0, |

| | | | | | "l1CacheCapacity"=>16777216, |

| | | | | | "isSsd"=>0, |

| | | | | | "ssdUuid"=>"520f544a-1d27-aecc-cbf4-2f170e4bf0f8", |

| | | | | | "volumeName"=>"52af1537-3d763738-2c93-005056bb3032"} |

[...]
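The Content column is printed as a Ruby hash spread over several table rows. For flat entries like the DISK type it can be turned into a Python dictionary with very little code. The sketch below assumes that no value contains "=>" or "|" and that all values are numbers or strings; the rows are an abbreviated copy of entry 1 above, and the helper names are made up for this example.

```python
import json

# Abbreviated rows of entry 1 from the DISK listing.
ROWS = [
    '| 1 | DISK | 527547f8-247e-9901-c532-04b8d5b952b0 | esx2.virten.lab | Healthy | {"capacity"=>299842404352, |',
    '| | | | | | "iops"=>100, |',
    '| | | | | | "isSsd"=>0, |',
    '| | | | | | "ssdUuid"=>"526209e5-118a-5fbd-e1d0-0bdd816b32eb", |',
    '| | | | | | "volumeName"=>"52a9bf13-6b13325f-e4da-001b2193b3b0"} |',
]

def content_from_rows(rows):
    """Join the last table cell of every row into one string."""
    cells = [r.strip().strip("|").split("|")[-1].strip() for r in rows]
    return " ".join(cells)

def ruby_hash_to_dict(text):
    """Convert a flat Ruby hash literal to a dict by rewriting '=>' to ':'."""
    return json.loads(text.replace("=>", ":"))

disk = ruby_hash_to_dict(content_from_rows(ROWS))
capacity_gib = disk["capacity"] / 2**30  # bytes to GiB

print(f"{capacity_gib:.2f} GiB, ssd={bool(disk['isSsd'])}")
```

The capacity of 299842404352 bytes works out to 279.25 GiB, which appears to correspond to the 279.25 GB that vsan.disks_stats reports for the magnetic disks.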

Example 2 - List all disks from a specific ESXi host. Identify the host's UUID (Node UUID) with vsan.host_info:

/localhost/DC> vsan.host_info ~esx

VSAN enabled: yes

Cluster info:

Cluster role: agent

Cluster UUID: 525d9c62-1b87-5577-f76d-6f6d7bb4ba34

Node UUID: 52a61733-bcf1-4c92-6436-001b2193b9a4

Member UUIDs: ["52785959-6bee-21e0-e664-eca86bf99b3f", "52a61831-6060-22d7-c23d-001b2193b3b0", "52a61733-bcf1-4c92-6436-001b2193b9a4", "52aaffa8-d345-3a75-6809-005056bb3032"] (4)

Storage info:

Auto claim: no

Disk Mappings:

SSD: SanDisk_SDSSDP064G - 59 GB

MD: WDC_WD1500HLFS2D01G6U3 - 139 GB

MD: WDC_WD1500ADFD2D00NLR1 - 139 GB

MD: VB0250EAVER - 232 GB

NetworkInfo:

Adapter: vmk1 (192.168.222.21)

/localhost/DC> vsan.cmmds_find ~cluster -t DISK -o 52a61733-bcf1-4c92-6436-001b2193b9a4

+---+------+--------------------------------------+-----------------+---------+-------------------------------------------------------+

| # | Type | UUID | Owner | Health | Content |

+---+------+--------------------------------------+-----------------+---------+-------------------------------------------------------+

| 1 | DISK | 52f853e4-7eca-2e9c-b157-843aefcde6dd | esx1.virten.lab | Healthy | {"capacity"=>149786984448, |

| | | | | | "iops"=>100, |

| | | | | | "iopsWritePenalty"=>10000000, |

| | | | | | "throughput"=>200000000, |

| | | | | | "throughputWritePenalty"=>0, |

| | | | | | "latency"=>3400000, |

| | | | | | "latencyDeviation"=>0, |

| | | | | | "reliabilityBase"=>10, |

| | | | | | "reliabilityExponent"=>15, |

| | | | | | "mtbf"=>1600000, |

| | | | | | "l2CacheCapacity"=>0, |

| | | | | | "l1CacheCapacity"=>16777216, |

| | | | | | "isSsd"=>0, |

| | | | | | "ssdUuid"=>"5292ca0e-5315-c0a8-6396-bd7d93a92d92", |

| | | | | | "volumeName"=>"52a9befe-6a2ccbfe-9d16-001b2193b9a4"} |

| 2 | DISK | 5292ca0e-5315-c0a8-6396-bd7d93a92d92 | esx1.virten.lab | Healthy | {"capacity"=>44812992512, |

| | | | | | "iops"=>20000, |

| | | | | | "iopsWritePenalty"=>10000000, |

| | | | | | "throughput"=>200000000, |

| | | | | | "throughputWritePenalty"=>0, |

| | | | | | "latency"=>3400000, |

| | | | | | "latencyDeviation"=>0, |

| | | | | | "reliabilityBase"=>10, |

| | | | | | "reliabilityExponent"=>15, |

| | | | | | "mtbf"=>2000000, |

| | | | | | "l2CacheCapacity"=>0, |

| | | | | | "l1CacheCapacity"=>16777216, |

| | | | | | "isSsd"=>1, |

| | | | | | "ssdUuid"=>"5292ca0e-5315-c0a8-6396-bd7d93a92d92", |

| | | | | | "volumeName"=>"NA"} |

| 3 | DISK | 5287fb1c-4cfc-04e0-6a22-281b4c259a1c | esx1.virten.lab | Healthy | {"capacity"=>249913409536, |

| | | | | | "iops"=>100, |

| | | | | | "iopsWritePenalty"=>10000000, |

[...]
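The two steps of Example 2 (look up the Node UUID, then filter by owner) are easy to chain when you script RVC. Here is a small Python sketch, assuming the vsan.host_info output has already been captured as text. The functions node_uuid and cmmds_find_command are hypothetical helpers that only assemble the command line shown above; they do not talk to RVC.

```python
import re

# First lines of the vsan.host_info output from the example.
HOST_INFO = """\
VSAN enabled: yes
Cluster info:
Cluster role: agent
Cluster UUID: 525d9c62-1b87-5577-f76d-6f6d7bb4ba34
Node UUID: 52a61733-bcf1-4c92-6436-001b2193b9a4
"""

def node_uuid(host_info_text):
    """Extract the 36-character Node UUID from vsan.host_info output."""
    match = re.search(r"^\s*Node UUID:\s*([0-9a-f-]{36})\s*$",
                      host_info_text, re.MULTILINE)
    if match is None:
        raise ValueError("no Node UUID line found")
    return match.group(1)

def cmmds_find_command(target, entry_type, owner_uuid):
    """Build a vsan.cmmds_find command line with the -t and -o options."""
    return f"vsan.cmmds_find {target} -t {entry_type} -o {owner_uuid}"

command = cmmds_find_command("~cluster", "DISK", node_uuid(HOST_INFO))
print(command)
```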

Example 3 - List DOM Objects from a specific ESXi Host:

/localhost/DC> vsan.cmmds_find ~cluster -t DOM_OBJECT -o 52a61733-bcf1-4c92-6436-001b2193b9a4

[...]

| 16 | DOM_OBJECT | 7e62c152-7dfb-c6e5-07b8-001b2193b9a4 | esx1.virten.lab | Healthy | {"type"=>"Configuration", |

| | | | | | "attributes"=> |

| | | | | | {"CSN"=>3, |

| | | | | | "addressSpace"=>268435456, |

| | | | | | "compositeUuid"=>"7e62c152-7dfb-c6e5-07b8-001b2193b9a4"}, |

| | | | | | "child-1"=> |

| | | | | | {"type"=>"RAID_1", |

| | | | | | "attributes"=>{}, |

| | | | | | "child-1"=> |

| | | | | | {"type"=>"Component", |

| | | | | | "attributes"=> |

| | | | | | {"capacity"=>268435456, |

| | | | | | "addressSpace"=>268435456, |

| | | | | | "componentState"=>5, |

| | | | | | "componentStateTS"=>1388405374, |

| | | | | | "faultDomainId"=>"52a61831-6060-22d7-c23d-001b2193b3b0"}, |

| | | | | | "componentUuid"=>"7e62c152-763d-1400-2b06-001b2193b9a4", |

| | | | | | "diskUuid"=>"527547f8-247e-9901-c532-04b8d5b952b0"}, |

| | | | | | "child-2"=> |

| | | | | | {"type"=>"Component", |

| | | | | | "attributes"=> |

| | | | | | {"capacity"=>268435456, |

| | | | | | "addressSpace"=>268435456, |

| | | | | | "componentState"=>5, |

| | | | | | "componentStateTS"=>1388590529, |

| | | | | | "staleLsn"=>0, |

| | | | | | "faultDomainId"=>"52a61733-bcf1-4c92-6436-001b2193b9a4"}, |

| | | | | | "componentUuid"=>"c135c452-2f04-0533-dbbc-001b2193b9a4", |

| | | | | | "diskUuid"=>"52f853e4-7eca-2e9c-b157-843aefcde6dd"}}, |

| | | | | | "child-2"=> |

| | | | | | {"type"=>"Witness", |

| | | | | | "attributes"=> |

| | | | | | {"componentState"=>5, |

| | | | | | "isWitness"=>1, |

| | | | | | "faultDomainId"=>"52aaffa8-d345-3a75-6809-005056bb3032"}, |

| | | | | | "componentUuid"=>"c135c452-cd77-0733-1708-001b2193b9a4", |

| | | | | | "diskUuid"=>"526e0510-6461-3410-5be0-0d9ec1b1495f"}} |

[...]
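The DOM_OBJECT content is a tree: a Configuration node with RAID nodes below it, and Component and Witness entries as leaves. To see how the leaves map to disks and hosts, the tree can be walked recursively. The Python sketch below uses a hand-copied, shortened version of entry 16 and a disk-to-host table taken from the DISK listings in Examples 1 and 2. The function leaves and the DISK_OWNERS table are assumptions made for this illustration.

```python
# Entry 16 from the example, reduced to the fields used here.
dom_object = {
    "type": "Configuration",
    "attributes": {"CSN": 3, "addressSpace": 268435456,
                   "compositeUuid": "7e62c152-7dfb-c6e5-07b8-001b2193b9a4"},
    "child-1": {
        "type": "RAID_1",
        "attributes": {},
        "child-1": {"type": "Component",
                    "attributes": {"capacity": 268435456, "componentState": 5},
                    "componentUuid": "7e62c152-763d-1400-2b06-001b2193b9a4",
                    "diskUuid": "527547f8-247e-9901-c532-04b8d5b952b0"},
        "child-2": {"type": "Component",
                    "attributes": {"capacity": 268435456, "componentState": 5},
                    "componentUuid": "c135c452-2f04-0533-dbbc-001b2193b9a4",
                    "diskUuid": "52f853e4-7eca-2e9c-b157-843aefcde6dd"},
    },
    "child-2": {"type": "Witness",
                "attributes": {"componentState": 5, "isWitness": 1},
                "componentUuid": "c135c452-cd77-0733-1708-001b2193b9a4",
                "diskUuid": "526e0510-6461-3410-5be0-0d9ec1b1495f"},
}

# Disk UUID -> owning host, copied from the DISK listings above.
DISK_OWNERS = {
    "527547f8-247e-9901-c532-04b8d5b952b0": "esx2.virten.lab",
    "52f853e4-7eca-2e9c-b157-843aefcde6dd": "esx1.virten.lab",
    "526e0510-6461-3410-5be0-0d9ec1b1495f": "esx4.virten.lab",
}

def leaves(node, path=()):
    """Yield (path, node) for every Component or Witness below node."""
    path = path + (node["type"],)
    if node["type"] in ("Component", "Witness"):
        yield path, node
        return
    children = sorted((k for k in node if k.startswith("child-")),
                      key=lambda k: int(k.split("-")[1]))
    for key in children:
        yield from leaves(node[key], path)

placements = [
    (" > ".join(path), node["componentUuid"],
     DISK_OWNERS.get(node["diskUuid"], "unknown"))
    for path, node in leaves(dom_object)
]
for where, uuid, host in placements:
    print(f"{where}: {uuid} on {host}")
```

The result lines up with what vsan.object_info prints for the same object further down: the two mirror components on esx2 and esx1, and the witness on esx4.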

Example 4 - List LSOM Objects (Components) from a specific ESXi Host:

/localhost/DC> vsan.cmmds_find ~cluster -t LSOM_OBJECT -o 52a61733-bcf1-4c92-6436-001b2193b9a4

[...]

| 95 | LSOM_OBJECT | 2739c452-a2cf-e9ed-9d07-eca86bf99b3f | esx1.virten.lab | Healthy | {"diskUuid"=>"52bc69ac-35ad-c2f7-bab8-2ecf298cd4e5", |

| | | | | | "compositeUuid"=>"1d61c152-d60c-6a95-5470-eca86bf99b3f", |

| | | | | | "capacityUsed"=>17181966336, |

| | | | | | "physCapacityUsed"=>0} |

| 96 | LSOM_OBJECT | 895fc152-391f-c4f2-a697-eca86bf99b3f | esx1.virten.lab | Healthy | {"diskUuid"=>"52bc69ac-35ad-c2f7-bab8-2ecf298cd4e5", |

| | | | | | "compositeUuid"=>"895fc152-3056-1cde-3a1b-eca86bf99b3f", |

| | | | | | "capacityUsed"=>53477376, |

| | | | | | "physCapacityUsed"=>53477376} |

| 97 | LSOM_OBJECT | 6537c452-a9b1-f4f5-badb-001b2193b3b0 | esx1.virten.lab | Healthy | {"diskUuid"=>"52f853e4-7eca-2e9c-b157-843aefcde6dd", |

| | | | | | "compositeUuid"=>"ff5fc152-65b6-bc34-ff54-001b2193b9a4", |

| | | | | | "capacityUsed"=>2097152, |

| | | | | | "physCapacityUsed"=>0} |

[...]
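Because every LSOM_OBJECT entry carries a diskUuid, the entries can be grouped per disk to see how many components a disk holds and how much space they account for. A short Python sketch with the three entries from above, typed in as dictionaries. The field names suggest that capacityUsed is the reserved size and physCapacityUsed the physically written size, but that reading is my assumption.

```python
from collections import defaultdict

# Entries 95 to 97 from the example output.
lsom_objects = [
    {"diskUuid": "52bc69ac-35ad-c2f7-bab8-2ecf298cd4e5",
     "compositeUuid": "1d61c152-d60c-6a95-5470-eca86bf99b3f",
     "capacityUsed": 17181966336, "physCapacityUsed": 0},
    {"diskUuid": "52bc69ac-35ad-c2f7-bab8-2ecf298cd4e5",
     "compositeUuid": "895fc152-3056-1cde-3a1b-eca86bf99b3f",
     "capacityUsed": 53477376, "physCapacityUsed": 53477376},
    {"diskUuid": "52f853e4-7eca-2e9c-b157-843aefcde6dd",
     "compositeUuid": "ff5fc152-65b6-bc34-ff54-001b2193b9a4",
     "capacityUsed": 2097152, "physCapacityUsed": 0},
]

def usage_per_disk(entries):
    """Sum component count and byte counters per diskUuid."""
    totals = defaultdict(lambda: {"components": 0,
                                  "capacityUsed": 0,
                                  "physCapacityUsed": 0})
    for entry in entries:
        disk = totals[entry["diskUuid"]]
        disk["components"] += 1
        disk["capacityUsed"] += entry["capacityUsed"]
        disk["physCapacityUsed"] += entry["physCapacityUsed"]
    return dict(totals)

totals = usage_per_disk(lsom_objects)
for disk_uuid, t in totals.items():
    print(f'{disk_uuid}: {t["components"]} component(s), '
          f'{t["capacityUsed"] / 2**20:.0f} MiB capacityUsed')
```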

vsan.disk_object_info

Prints all objects that are located on a physical disk.

Example 1 - Get Disk UUID with vsan.cmmds_find and display all objects located on this disk:

/localhost/DC> vsan.cmmds_find ~cluster -t DISK

+---+------+--------------------------------------+-----------------+---------+-------------------------------------------------------+

| # | Type | UUID | Owner | Health | Content |

+---+------+--------------------------------------+-----------------+---------+-------------------------------------------------------+

| 1 | DISK | 52f853e4-7eca-2e9c-b157-843aefcde6dd | esx1.virten.lab | Healthy | {"capacity"=>149786984448, |

| | | | | | "iops"=>100, |

| | | | | | "iopsWritePenalty"=>10000000, |

| | | | | | "throughput"=>200000000, |

| | | | | | "throughputWritePenalty"=>0, |

| | | | | | "latency"=>3400000, |

| | | | | | "latencyDeviation"=>0, |

| | | | | | "reliabilityBase"=>10, |

| | | | | | "reliabilityExponent"=>15, |

| | | | | | "mtbf"=>1600000, |

| | | | | | "l2CacheCapacity"=>0, |

| | | | | | "l1CacheCapacity"=>16777216, |

| | | | | | "isSsd"=>0, |

| | | | | | "ssdUuid"=>"5292ca0e-5315-c0a8-6396-bd7d93a92d92", |

| | | | | | "volumeName"=>"52a9befe-6a2ccbfe-9d16-001b2193b9a4"} |

[...]

/localhost/DC> vsan.disk_object_info ~cluster 52f853e4-7eca-2e9c-b157-843aefcde6dd

Fetching VSAN disk info from [...] (this may take a moment) ...

Physical disk mpx.vmhba1:C0:T4:L0 (52f853e4-7eca-2e9c-b157-843aefcde6dd):

DOM Object: 27e4bd52-49f6-050e-178b-00505687439c (owner: esx1.virten.lab, policy: hostFailuresToTolerate = 1, stripeWidth = 2, forceProvisioning = 1, proportionalCapacity = 100)

Context: Part of VM vm1: Disk: [vsanDatastore] 5078bd52-2977-8cf9-107c-00505687439c/vm1_1.vmdk

Witness: bae5bd52-54f7-05ab-f4b3-00505687439c (state: ACTIVE (5), host: esx1.virten.lab, md: mpx.vmhba1:C0:T2:L0, ssd: mpx.vmhba1:C0:T1:L0)

Witness: bae5bd52-ebf9-ffaa-cbb4-00505687439c (state: ACTIVE (5), host: esx1.virten.lab, md: mpx.vmhba1:C0:T4:L0, ssd: mpx.vmhba1:C0:T1:L0)

Witness: bae5bd52-e59e-03ab-b080-00505687439c (state: ACTIVE (5), host: esx1.virten.lab, md: **mpx.vmhba1:C0:T4:L0**, ssd: mpx.vmhba1:C0:T1:L0)

RAID_1

RAID_0

Component: abe5bd52-4128-cf28-ef6f-00505687439c (state: ACTIVE (5), host: esx2.virtenlab, md: mpx.vmhba1:C0:T4:L0, ssd: mpx.vmhba1:C0:T1:L0)

Component: abe5bd52-1516-cb28-6e87-00505687439c (state: ACTIVE (5), host: esx2.virtenlab, md: mpx.vmhba1:C0:T2:L0, ssd: mpx.vmhba1:C0:T1:L0)

RAID_0

Component: 27e4bd52-4048-1465-ac76-00505687439c (state: ACTIVE (5), host: esx4.virtenlab, md: **mpx.vmhba1:C0:T4:L0**, ssd: mpx.vmhba1:C0:T1:L0)

Component: 27e4bd52-1d88-1265-22ab-00505687439c (state: ACTIVE (5), host: esx4.virtenlab, md: mpx.vmhba1:C0:T2:L0, ssd: mpx.vmhba1:C0:T1:L0)

DOM Object: 7e78bd52-7595-1716-85a2-005056871792 (owner: esx1.virtenlab, policy: hostFailuresToTolerate = 1, stripeWidth = 2, forceProvisioning = 1, proportionalCapacity = 100)

Context: Part of VM debian: Disk: [vsanDatastore] 6978bd52-4d92-05ed-dad2-005056871792/debian.vmdk

Witness: aee5bd52-2ae2-197b-e67a-005056871792 (state: ACTIVE (5), host: esx4.virtenlab, md: mpx.vmhba1:C0:T4:L0, ssd: mpx.vmhba1:C0:T1:L0)

Witness: aee5bd52-8557-0d7b-3022-005056871792 (state: ACTIVE (5), host: esx2.virtenlab, md: mpx.vmhba1:C0:T4:L0, ssd: mpx.vmhba1:C0:T1:L0)

Witness: aee5bd52-7443-177b-74a8-005056871792 (state: ACTIVE (5), host: esx2.virtenlab, md: mpx.vmhba1:C0:T4:L0, ssd: mpx.vmhba1:C0:T1:L0)

RAID_1

RAID_0

Component: 36debd52-7390-a05d-9225-005056871792 (state: ACTIVE (5), host: esx4.virtenlab, md: mpx.vmhba1:C0:T2:L0, ssd: mpx.vmhba1:C0:T1:L0)

Component: 36debd52-a9b8-965d-03a6-005056871792 (state: ACTIVE (5), host: esx4.virtenlab, md: **mpx.vmhba1:C0:T4:L0**, ssd: mpx.vmhba1:C0:T1:L0)

RAID_0

Component: 7f78bd52-2d59-c558-09f9-005056871792 (state: ACTIVE (5), host: esx3.virtenlab, md: mpx.vmhba1:C0:T2:L0, ssd: mpx.vmhba1:C0:T1:L0)

Component: 7f78bd52-d827-c458-9d94-005056871792 (state: ACTIVE (5), host: esx3.virtenlab, md: mpx.vmhba1:C0:T4:L0, ssd: mpx.vmhba1:C0:T1:L0)

DOM Object: 2ee4bd52-d1af-dd19-626d-00505687439c (owner: esx3.virtenlab, policy: hostFailuresToTolerate = 1, stripeWidth = 2, forceProvisioning = 1, proportionalCapacity = 100)

Context: Part of VM vm1: Disk: [vsanDatastore] 5078bd52-2977-8cf9-107c-00505687439c/vm1_2.vmdk

Witness: bde5bd52-959d-5d8b-6058-00505687439c (state: ACTIVE (5), host: esx3.virtenlab, md: **mpx.vmhba1:C0:T4:L0**, ssd: mpx.vmhba1:C0:T1:L0)

Witness: bde5bd52-4a48-588b-e464-00505687439c (state: ACTIVE (5), host: esx1.virtenlab, md: mpx.vmhba1:C0:T4:L0, ssd: mpx.vmhba1:C0:T1:L0)

[...]

vsan.object_info

Prints information about the physical location and configuration of a DOM Object:

Example 1 - Print physical location of a DOM Object:

/localhost/DC> vsan.object_info ~cluster 7e62c152-7dfb-c6e5-07b8-001b2193b9a4

2014-01-01 18:35:16 +0000: Fetching VSAN disk info from esx1.virten.lab (may take a moment) ...

2014-01-01 18:35:16 +0000: Fetching VSAN disk info from esx4.virten.lab (may take a moment) ...

2014-01-01 18:35:16 +0000: Fetching VSAN disk info from esx2.virten.lab (may take a moment) ...

2014-01-01 18:35:16 +0000: Fetching VSAN disk info from esx3.virten.lab (may take a moment) ...

2014-01-01 18:35:18 +0000: Done fetching VSAN disk infos

DOM Object: 7e62c152-7dfb-c6e5-07b8-001b2193b9a4 (owner: esx1.virten.lab, policy: hostFailuresToTolerate = 1, forceProvisioning = 1, proportionalCapacity = 100)

Witness: c135c452-cd77-0733-1708-001b2193b9a4 (state: ACTIVE (5), host: esx4.virten.lab, md: t10.ATA_____WDC_WD300HLFSD2D03DFC1, ssd: t10.ATA_____SanDisk_SDSSDP064G)

RAID_1

Component: c135c452-2f04-0533-dbbc-001b2193b9a4 (state: ACTIVE (5), host: esx1.virten.lab, md: t10.ATA_____WDC_WD300HLFSD2D00NLR1, ssd: t10.ATA_____SanDisk_SDSSDP064G)

Component: 7e62c152-763d-1400-2b06-001b2193b9a4 (state: ACTIVE (5), host: esx2.virten.lab, md: t10.ATA_____WDC_WD3000HLFS2D01G6U1, ssd: t10.ATA_____SanDisk_SDSSDP064G)

vsan.object_reconfigure

Changes the policy of DOM Objects. To use this command, you need to know the object UUID, which you can find with vsan.cmmds_find or vsan.vm_object_info. Available policy options are:

- hostFailuresToTolerate (Number of failures to tolerate)

- forceProvisioning (Force provisioning)

- stripeWidth (Number of disk stripes per object)

- cacheReservation (Flash read cache reservation)

- proportionalCapacity (Object space reservation)

Be careful to keep existing policies: always specify all options, including those you do not want to change. The policy has to be defined in the following format:

'(("hostFailuresToTolerate" i1) ("forceProvisioning" i1))'
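The nested parentheses are easy to get wrong when typing, so it can be handy to generate the expression. The following Python sketch renders a dictionary of options in the format shown above. It assumes that every value is a non-negative integer written with the "i" prefix, as in the examples of this post; build_policy is a made-up helper name.

```python
def build_policy(options):
    """Render integer policy options as a policy expression string."""
    parts = []
    for name, value in options.items():
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(value, bool) or not isinstance(value, int) or value < 0:
            raise TypeError(f"{name}: this sketch handles non-negative integers only")
        parts.append(f'("{name}" i{value})')
    return "(" + " ".join(parts) + ")"

policy = build_policy({"hostFailuresToTolerate": 1, "forceProvisioning": 1})
quoted = f"'{policy}'"  # single quotes as used on the RVC command line

print(quoted)
```

Passing the complete set of options through one dictionary also makes it harder to forget an option that should be kept.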

Example 1 - Change the disk policy to tolerate 2 host failures.

Current policy is hostFailuresToTolerate = 1, stripeWidth = 1

/localhost/DC> vsan.object_reconfigure ~cluster 5078bd52-2977-8cf9-107c-00505687439c -p '(("hostFailuresToTolerate" i2) ("stripeWidth" i1))'

Example 2 - Disable Force provisioning. Current policy is hostFailuresToTolerate = 1, stripeWidth = 1

/localhost/DC> vsan.object_reconfigure ~cluster 5078bd52-2977-8cf9-107c-00505687439c -p '(("hostFailuresToTolerate" i1) ("stripeWidth" i1) ("forceProvisioning" i0))'

Example 3 - Locate and change VM disk policies

/localhost/DC> vsan.vm_object_info ~vm

VM perf1:

Namespace directory

[...]

Disk backing: [vsanDatastore] 6978bd52-4d92-05ed-dad2-005056871792/vma.virten.lab.vmdk

DOM Object: 7e78bd52-7595-1716-85a2-005056871792 (owner: esx1.virtenlab, policy: hostFailuresToTolerate = 1, stripeWidth = 2, forceProvisioning = 1)

Witness: aee5bd52-7443-177b-74a8-005056871792 (state: ACTIVE (5), host: esx2.virten.lab, md: mpx.vmhba1:C0:T4:L0, ssd: mpx.vmhba1:C0:T1:L0)

RAID_1

RAID_0

Component: 36debd52-7390-a05d-9225-005056871792 (state: ACTIVE (5), esx3.virten.lab, md: mpx.vmhba1:C0:T2:L0, ssd: mpx.vmhba1:C0:T1:L0)

Component: 36debd52-a9b8-965d-03a6-005056871792 (state: ACTIVE (5), esx3.virten.lab, md: mpx.vmhba1:C0:T4:L0, ssd: mpx.vmhba1:C0:T1:L0)

RAID_0

Component: 7f78bd52-2d59-c558-09f9-005056871792 (state: ACTIVE (5), esx1.virten.lab, md: mpx.vmhba1:C0:T2:L0, ssd: mpx.vmhba1:C0:T1:L0)

Component: 7f78bd52-d827-c458-9d94-005056871792 (state: ACTIVE (5), esx1.virten.lab, md: mpx.vmhba1:C0:T4:L0, ssd: mpx.vmhba1:C0:T1:L0)

/localhost/DC> vsan.object_reconfigure ~cluster 7e78bd52-7595-1716-85a2-005056871792 -p '(("hostFailuresToTolerate" i1) ("stripeWidth" i1) ("forceProvisioning" i1))'

Reconfiguring '7e78bd52-7595-1716-85a2-005056871792' to (("hostFailuresToTolerate" i1) ("stripeWidth" i1) ("forceProvisioning" i1))

All reconfigs initiated. Synching operation may be happening in the background
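Example 3 can also be scripted so that existing options are carried over automatically. The Python sketch below reads the current policy from the DOM Object line, changes only stripeWidth and prints the resulting command. It is an illustration under a few assumptions: only the five options listed above are considered, only plain integer values are parsed (so a value like proportionalCapacity = [0, 100] is skipped), and the helper names are hypothetical.

```python
import re

# DOM Object line from the vsan.vm_object_info output in Example 3.
DOM_LINE = ("DOM Object: 7e78bd52-7595-1716-85a2-005056871792 "
            "(owner: esx1.virtenlab, policy: hostFailuresToTolerate = 1, "
            "stripeWidth = 2, forceProvisioning = 1)")

# The policy options named in this post.
KNOWN_OPTIONS = {"hostFailuresToTolerate", "forceProvisioning", "stripeWidth",
                 "cacheReservation", "proportionalCapacity"}

def object_uuid(dom_line):
    """Return the UUID that follows 'DOM Object:'."""
    return re.match(r"\s*DOM Object: (\S+)", dom_line).group(1)

def current_policy(dom_line):
    """Return the known integer options from the 'policy:' part of the line."""
    policy_text = dom_line.split("policy:", 1)[1]
    pairs = re.findall(r"(\w+) = (\d+)(?=\s*[,)]|\s*$)", policy_text)
    return {name: int(value) for name, value in pairs if name in KNOWN_OPTIONS}

def policy_expression(options):
    """Render options in the '(("name" iN) ...)' format."""
    return "(" + " ".join(f'("{n}" i{v})' for n, v in options.items()) + ")"

policy = current_policy(DOM_LINE)
policy["stripeWidth"] = 1  # the only option changed in Example 3

command = (f"vsan.object_reconfigure ~cluster {object_uuid(DOM_LINE)} "
           f"-p '{policy_expression(policy)}'")
print(command)
```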

Manage VSAN with RVC Series

- Manage VSAN with RVC Part 1 – Basic Configuration Tasks

- Manage VSAN with RVC Part 2 – VSAN Cluster Administration

- Manage VSAN with RVC Part 3 – Object Management

- Manage VSAN with RVC Part 4 – Troubleshooting

- Manage VSAN with RVC Part 5 – Observer